The effects of various levels of sampling and quantization on an image were observed. Mean squared error was computed to measure the quality of the processed image relative to the original image.

Images were subjected to point operations in order to enhance them. The histograms of the images were also computed, analyzed and equalized in order to improve contrast.

Various spatial filters were applied to the images and the results were analyzed. The images were sharpened in order to enhance the edges.

A modified version of the Gaussian filter, called the bilateral filter, was implemented and the results were compared with those of Gaussian filters. Morphological filters such as erosion and dilation were applied to the images and tested.

Canny edge detection was implemented and the results were compared with Sobel edge detection. Images were analyzed and filtered based on the noise present in the image.

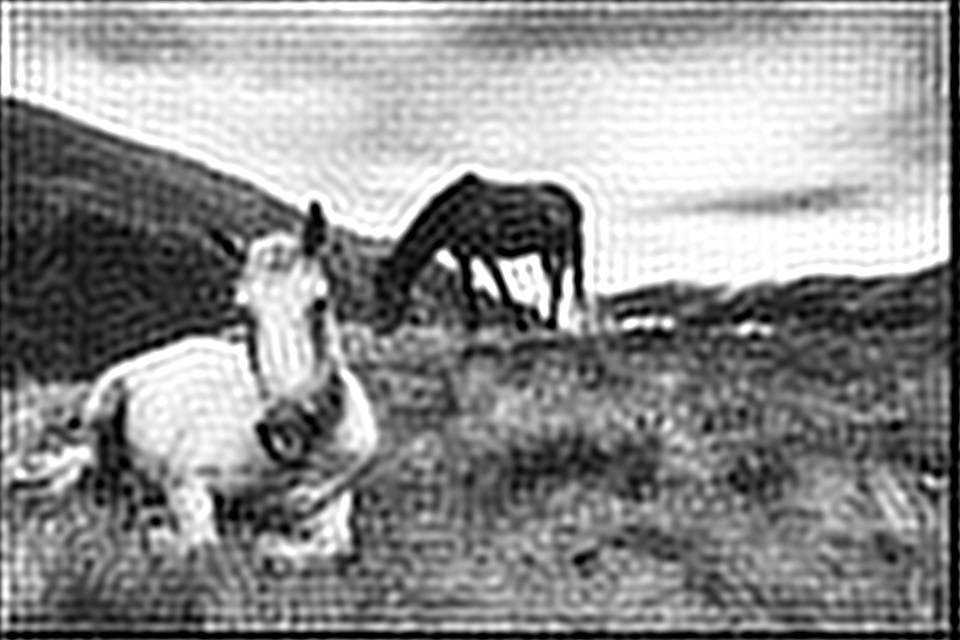

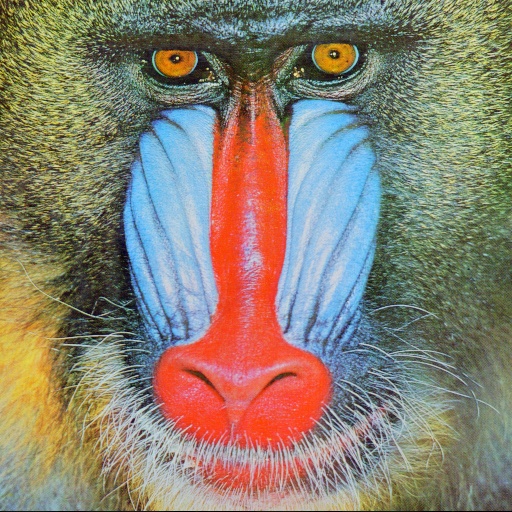

Deconvolution was performed using inverse, pseudo-inverse and Wiener filters. The filters were tested on images with Gaussian blur, motion blur and additive Gaussian noise.

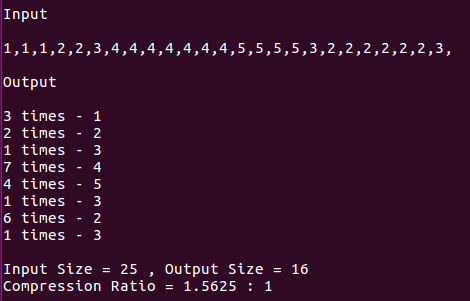

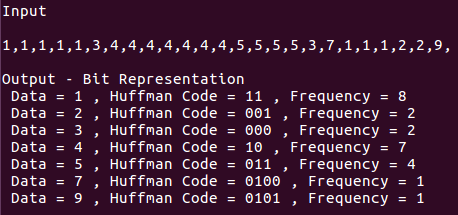

Run-length encoding and Huffman encoding were implemented on a given dataset, and the resulting compression effects were observed and analyzed.

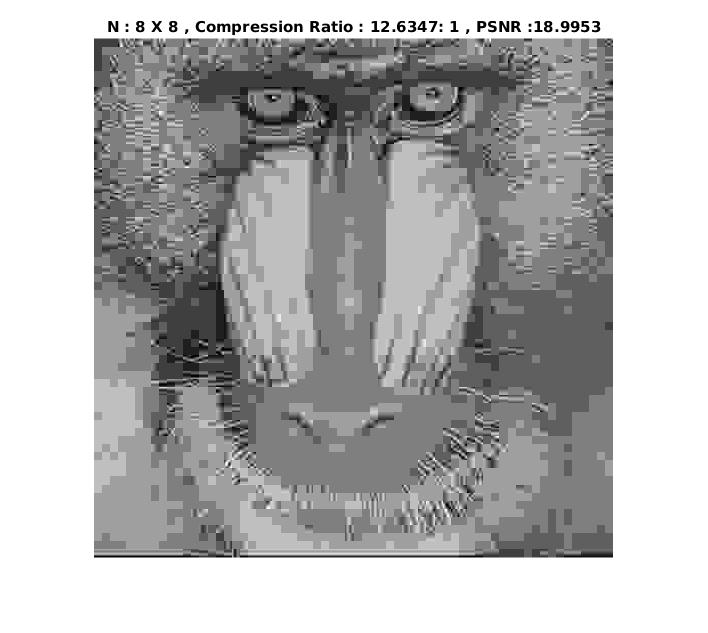

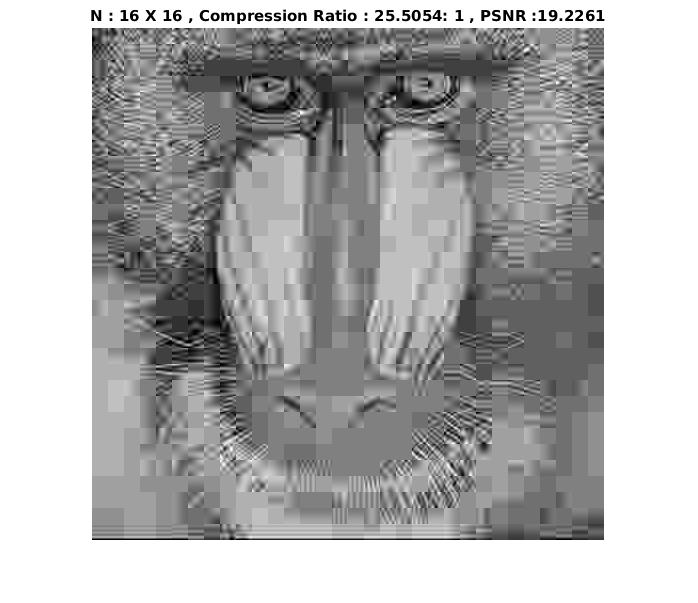

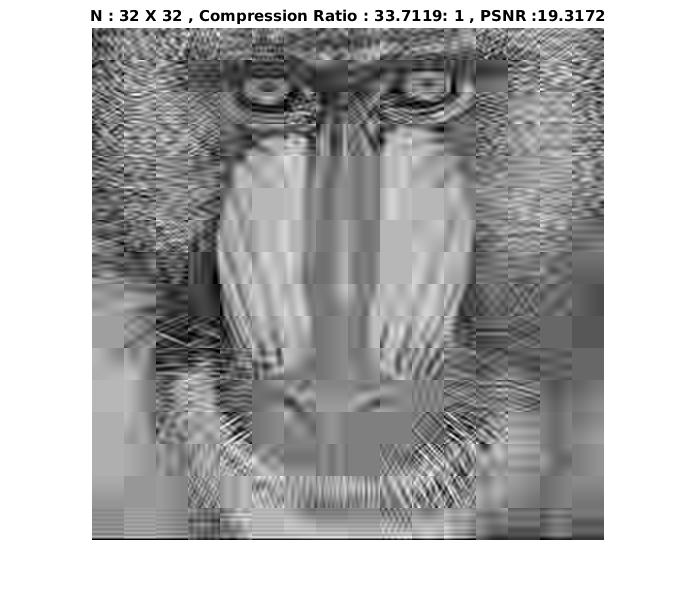

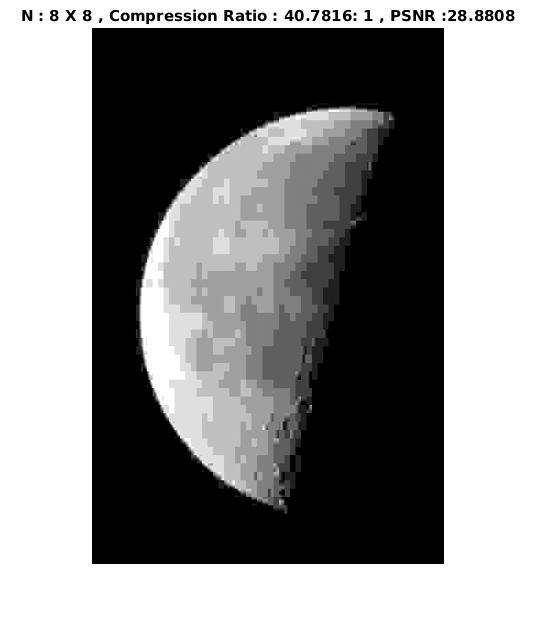

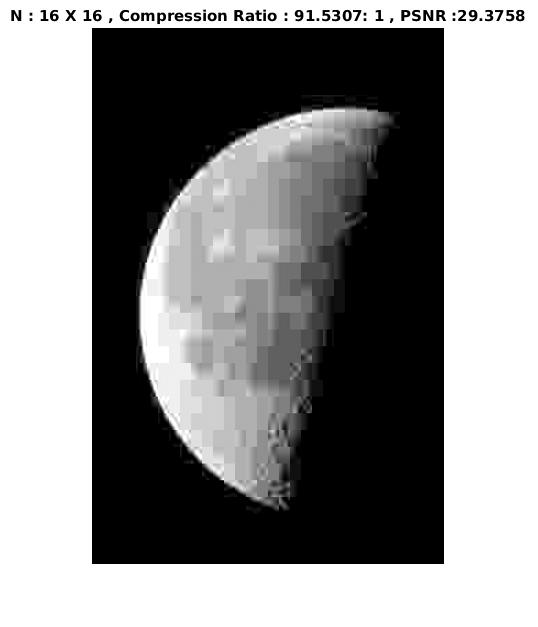

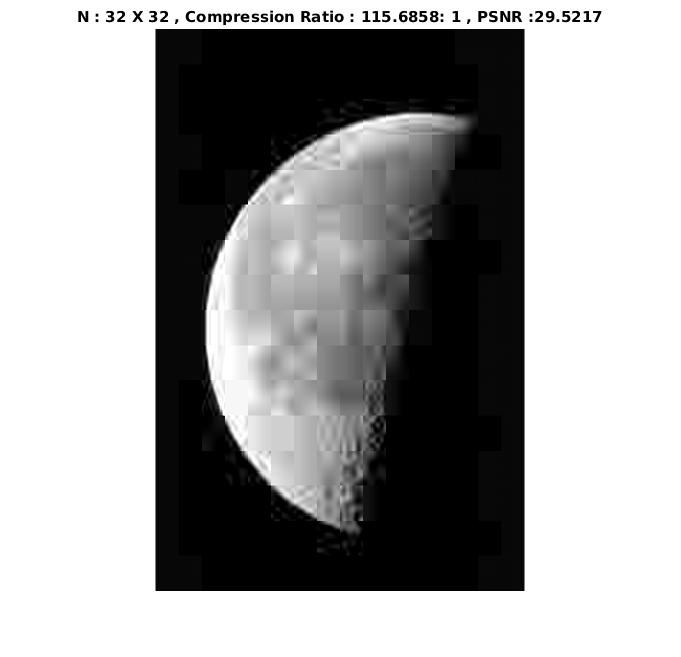

Block-based discrete cosine transforms were used to analyze images for use in compression. Blurred images were partially restored using pseudo-inverse filters.

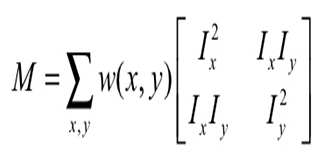

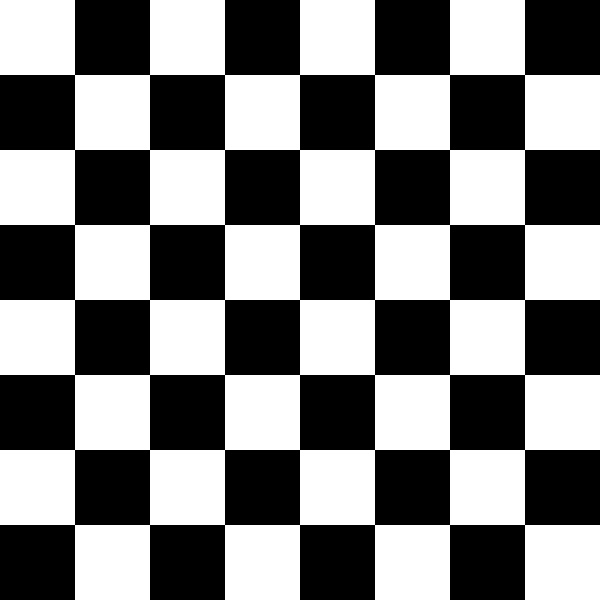

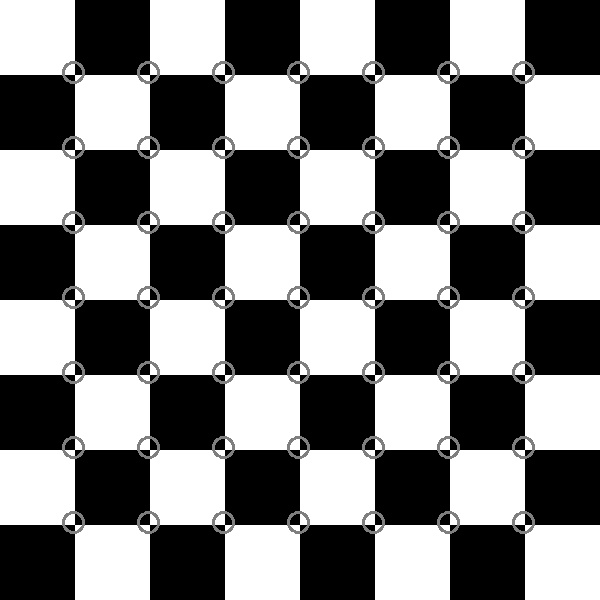

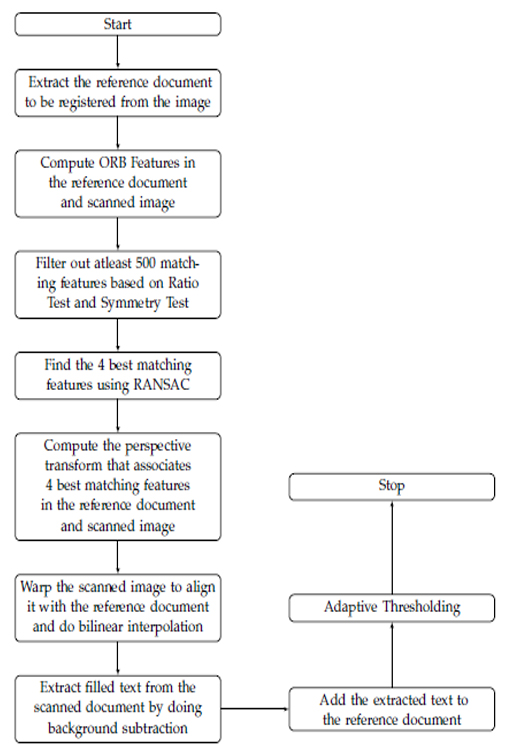

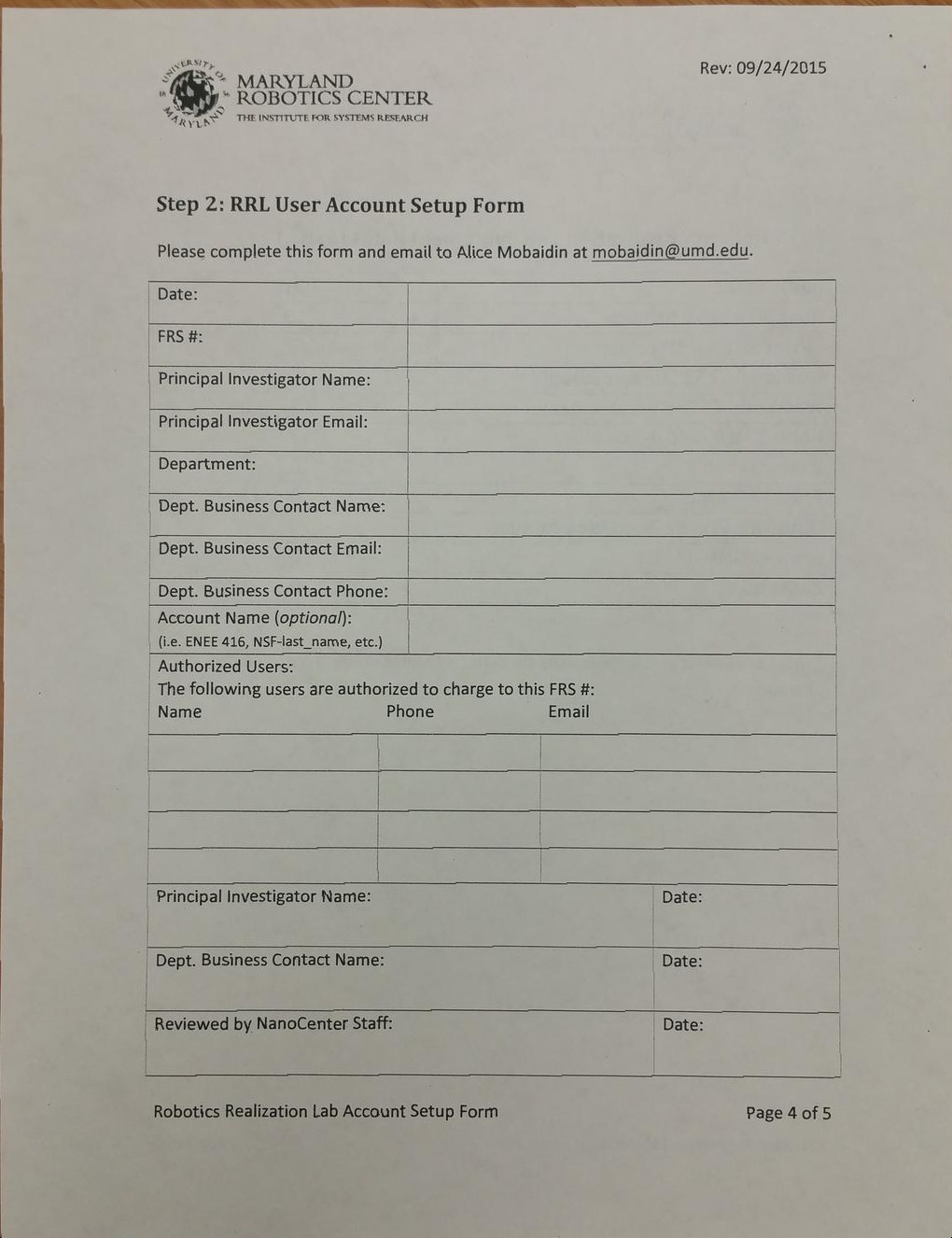

Adaptive thresholding was implemented and the results were compared with global thresholding. Corner features in an image were extracted using the Harris corner detection method.

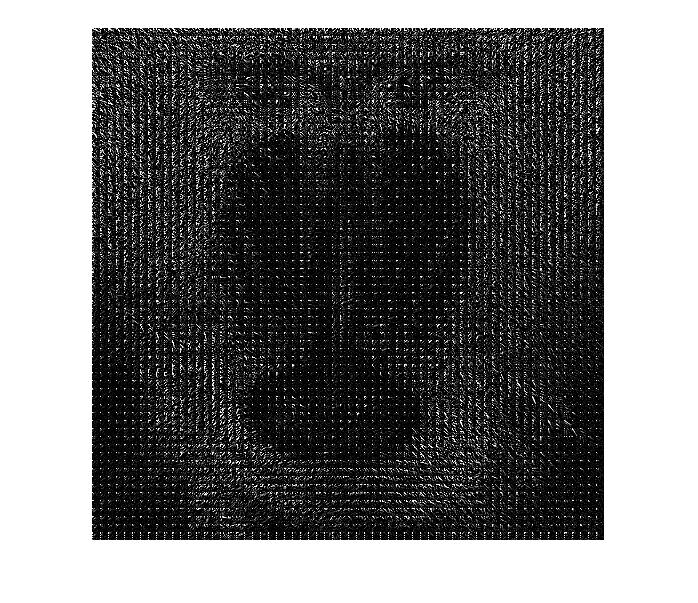

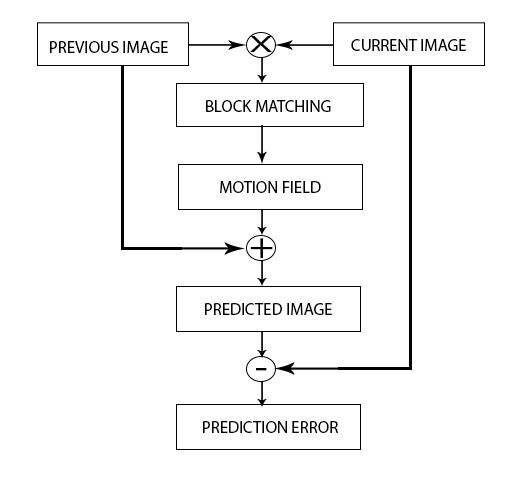

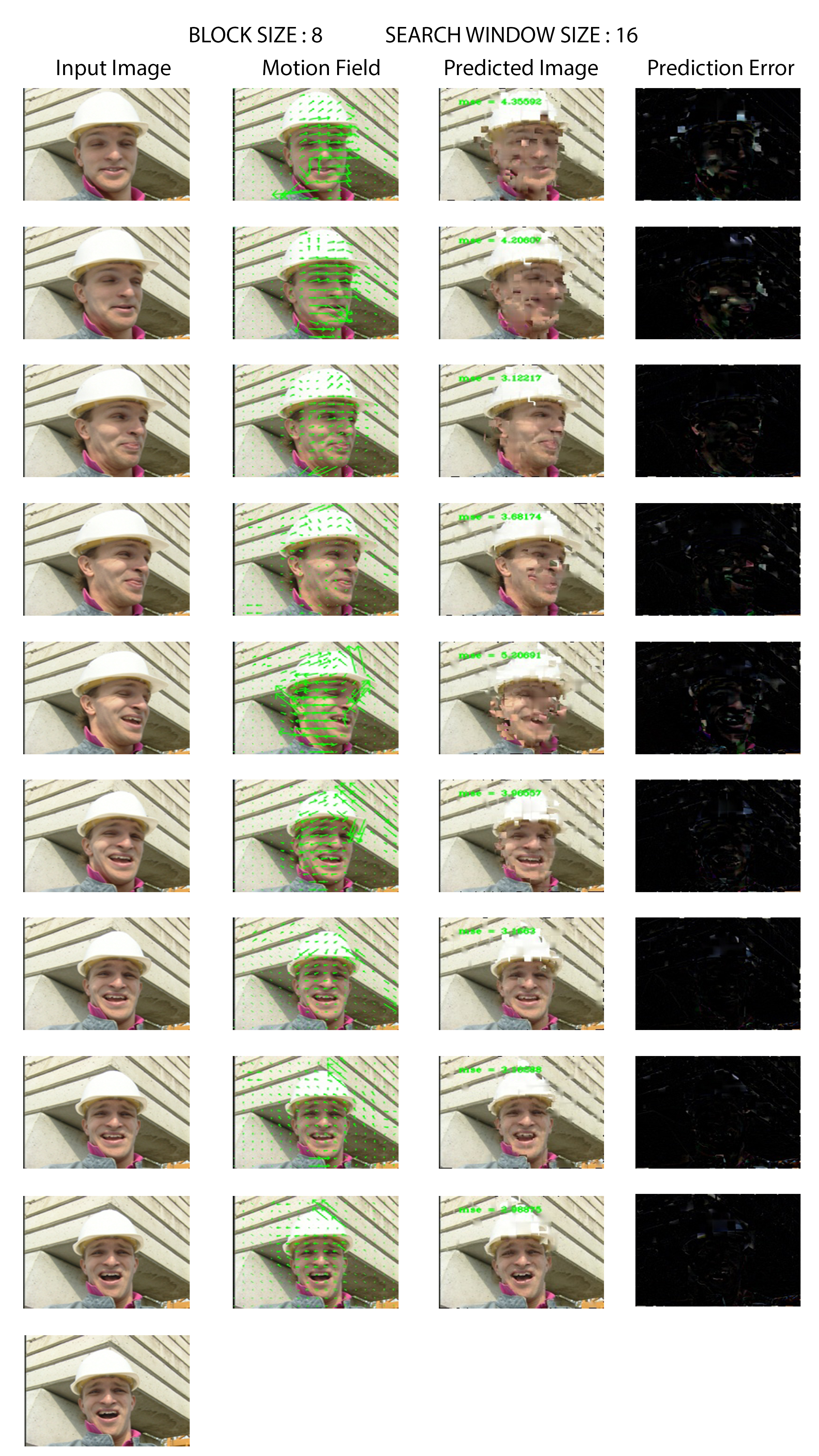

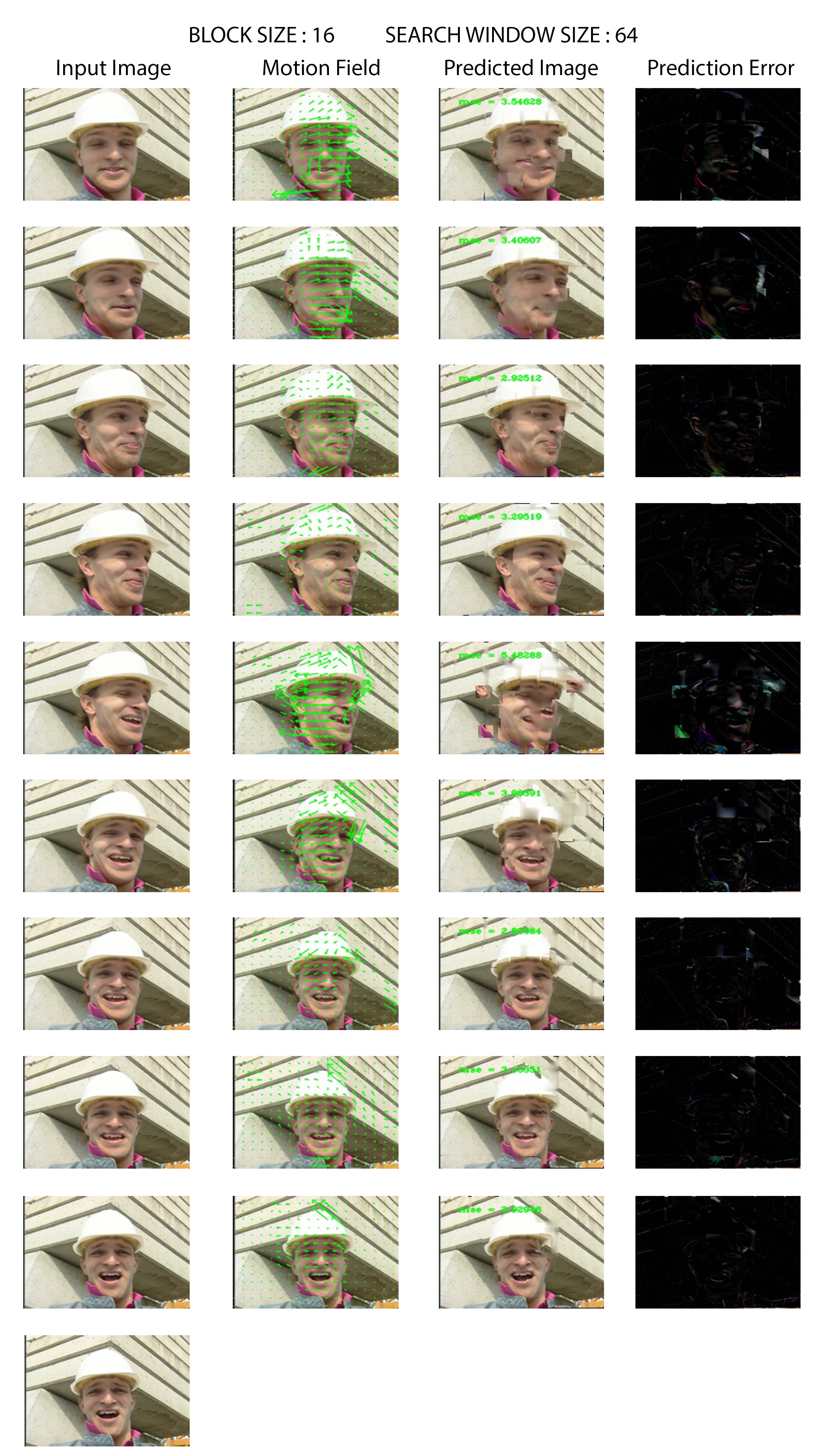

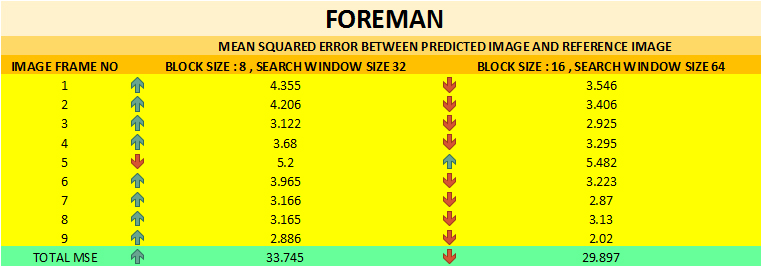

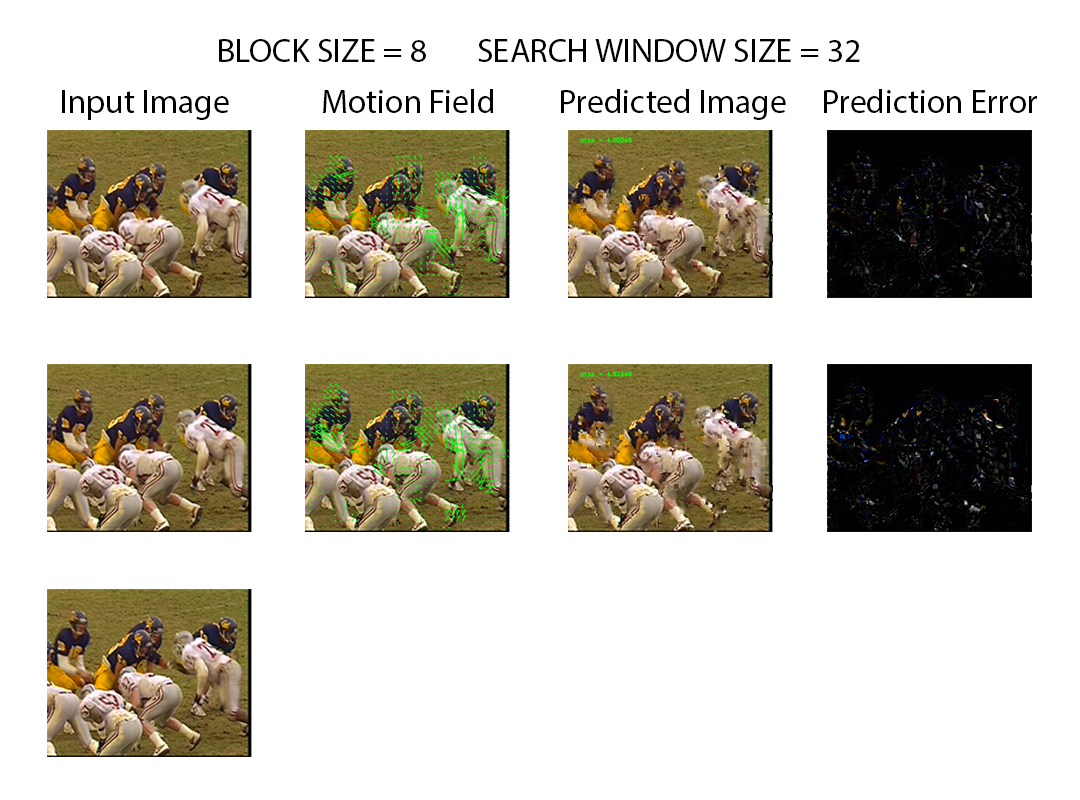

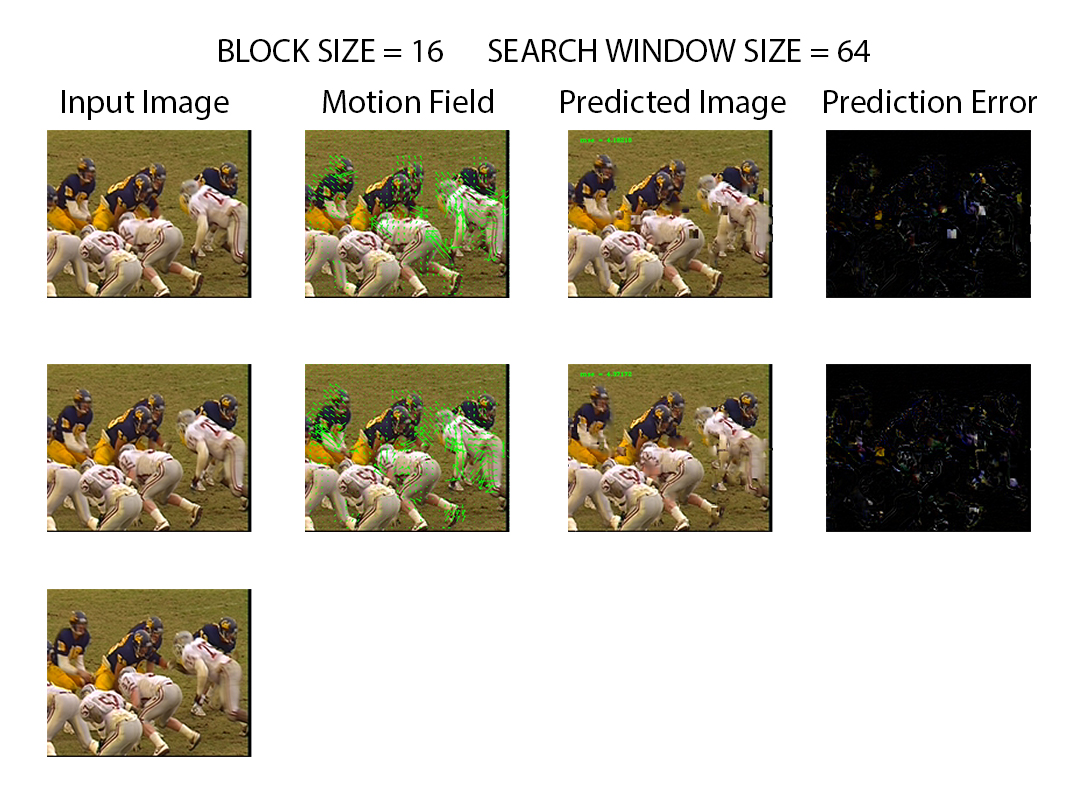

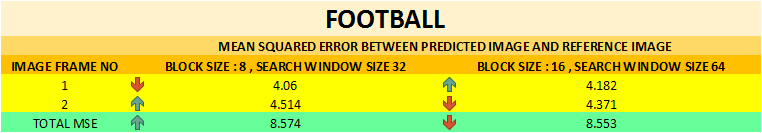

A block matching algorithm was implemented to estimate the motion between consecutive frames and to compensate for that motion in order to compress the images. Various images were tested with different block sizes and search window sizes.

Sampling is the process of obtaining the values of an image at the points of a rectangular grid on the image plane. It can be done at various spatial frequencies (resolutions). However, when the sampling rate falls below the Nyquist rate, that is, below twice the highest spatial frequency present in the image, information is lost. Below is a picture of Mickey Mouse at three resolutions (640 x 480, 320 x 240 and 32 x 24). At 32 x 24 the figure is no longer clear: the information has been lost due to aliasing.
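The effect of sampling below the Nyquist rate can be illustrated without OpenCV. The following is a minimal sketch, assuming a one-dimensional signal stands in for one image row; the function names `decimate` and `average_then_decimate` are hypothetical and are not part of the program in this section. It contrasts plain subsampling with subsampling after a crude averaging (low-pass) step.

```cpp
#include <cstddef>
#include <vector>

// Keep every `factor`-th sample with no prefiltering (naive subsampling).
std::vector<int> decimate(const std::vector<int>& signal, std::size_t factor) {
    std::vector<int> out;
    if (factor == 0) return out;  // assumption: a zero factor yields an empty result
    for (std::size_t i = 0; i < signal.size(); i += factor) out.push_back(signal[i]);
    return out;
}

// Average each block of `factor` samples, then keep one value per block.
// The averaging acts as a crude low-pass (anti-aliasing) filter.
std::vector<int> average_then_decimate(const std::vector<int>& signal, std::size_t factor) {
    std::vector<int> out;
    if (factor == 0) return out;
    for (std::size_t i = 0; i + factor <= signal.size(); i += factor) {
        int sum = 0;
        for (std::size_t k = 0; k < factor; ++k) sum += signal[i + k];
        out.push_back(sum / static_cast<int>(factor));
    }
    return out;
}
```

For an alternating row such as 0, 255, 0, 255, ..., taking every second sample returns only zeros, so the stripe pattern is replaced by a flat dark region, while averaging first returns a uniform mid-gray that at least preserves the mean brightness.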

#include <stdio.h>

#include <opencv2/opencv.hpp>

using namespace cv;

int main(int argc, char** argv )

{

Mat image;

image = imread( "../images/mickey.jpg", 1 );

cv::Mat resized_image1 = cv::Mat(240, 320, CV_8UC3, cv::Scalar(0, 0, 0)) ;

cv::Mat resized_image2 = cv::Mat(24, 32, CV_8UC3, cv::Scalar(0, 0, 0)) ;

int rows = 600;

int cols = 800;

// Validate the input before using it; resizing an empty image would fail.

if ( !image.data )

{

printf("No image data \n");

return -1;

}

resize(image,resized_image1,resized_image1.size(),0,0);

resize(image,resized_image2,resized_image2.size(),0,0);

namedWindow("Original Image", WINDOW_NORMAL);

resizeWindow("Original Image",cols,rows); // resizeWindow expects (width, height)

imwrite( "../results/sampling/original_image.jpg", image );

imshow("Original Image", image);

namedWindow("320X240 Image", WINDOW_NORMAL );

resizeWindow("320X240 Image",cols,rows); // resizeWindow expects (width, height)

imwrite( "../results/sampling/320_x_240_image.jpg", resized_image1);

imshow("320X240 Image", resized_image1);

namedWindow("32X24 Image", WINDOW_NORMAL );

resizeWindow("32X24 Image",cols,rows); // resizeWindow expects (width, height)

imwrite( "../results/sampling/32_x_24_image.jpg", resized_image2 );

imshow("32X24 Image", resized_image2);

waitKey(0);

return 0;

}

Quantization is the process by which the sampled values of the image are approximated using a desired number of distinct levels. As the number of distinct levels decreases, the quality of the image also decreases. Quality can be measured using the mean squared error (MSE), the average of the squared differences between corresponding pixel values of the original image and the quantized image. Below is a picture of the Avengers quantized to various bit depths per channel (8-bit, 4-bit, 2-bit, 1-bit). The mean squared error increases as the image is approximated more coarsely, reflecting the loss of quality.
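The two ideas in this paragraph can be sketched on a flat buffer of 8-bit samples. This is a minimal illustration, assuming bit-depth reduction is done by keeping the most significant bits; the names `quantize_sample` and `mse` are hypothetical helpers chosen for this sketch.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Keep only the `bits` most significant bits of an 8-bit sample (1 <= bits <= 8).
std::uint8_t quantize_sample(std::uint8_t value, int bits) {
    const unsigned mask = (0xFFu << (8 - bits)) & 0xFFu;
    return static_cast<std::uint8_t>(value & mask);
}

// Mean squared error between two equally sized sample buffers.
double mse(const std::vector<std::uint8_t>& a, const std::vector<std::uint8_t>& b) {
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const double diff = static_cast<double>(a[i]) - static_cast<double>(b[i]);
        sum += diff * diff;  // square by multiplication
    }
    return a.empty() ? 0.0 : sum / static_cast<double>(a.size());
}
```

With 1 bit per channel every sample collapses to either 0 or 128, which is why the error grows quickly as the bit depth drops.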

#include <stdio.h>

#include <opencv2/opencv.hpp>

#include <math.h>

#include <sstream>

using namespace cv;

Mat quantize(const Mat& input_image,int number_of_bits)

{

Mat quantized_image = input_image.clone();

uchar masked_bit = 0xFF;

masked_bit = masked_bit << (8 - number_of_bits);

for(int j = 0; j < quantized_image.rows; j++)

for(int i = 0; i < quantized_image.cols; i++)

{

Vec3b rgb_value = quantized_image.at< Vec3b >(j, i);

rgb_value[0] = rgb_value[0] & masked_bit;

rgb_value[1] = rgb_value[1] & masked_bit;

rgb_value[2] = rgb_value[2] & masked_bit;

quantized_image.at< Vec3b >(j, i) = rgb_value;

}

return quantized_image;

}

float mean_squared_error(const Mat& image_1, const Mat& image_2)

{

int m = image_1.rows;

int n = image_1.cols;

float mse = 0.0;

for(int j = 0; j < m ; j++)

for(int i = 0; i < n; i++)

{

Vec3b rgb_value_1 = image_1.at< Vec3b >(j, i);

Vec3b rgb_value_2 = image_2.at< Vec3b >(j, i);

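// '^' is bitwise XOR in C++, not exponentiation, so square each difference explicitly.

float diff_0 = rgb_value_1[0] - rgb_value_2[0];

float diff_1 = rgb_value_1[1] - rgb_value_2[1];

float diff_2 = rgb_value_1[2] - rgb_value_2[2];

mse += diff_0*diff_0 + diff_1*diff_1 + diff_2*diff_2;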

}

return mse/(m*n*3);

}

int main(int argc, char** argv )

{

Mat image, image_4_bit, image_2_bit, image_1_bit;

image = imread( "../images/avengers.jpg", 1 );

if ( !image.data )

{

printf("No image data \n");

return -1;

}

image_4_bit = quantize(image,4);

image_2_bit = quantize(image,2);

image_1_bit = quantize(image,1);

float mse_4_bit, mse_2_bit, mse_1_bit;

mse_4_bit = mean_squared_error(image,image_4_bit);

mse_2_bit = mean_squared_error(image,image_2_bit);

mse_1_bit = mean_squared_error(image,image_1_bit);

std::ostringstream str_4_bit, str_2_bit,str_1_bit;

str_4_bit << "MSE of 4 Bit Image = " << mse_4_bit;

str_2_bit << "MSE of 2 Bit Image = " << mse_2_bit;

str_1_bit << "MSE of 1 Bit Image = " << mse_1_bit;

namedWindow("Original Image", WINDOW_AUTOSIZE);

imwrite( "../results/quantization/original_image.jpg", image );

imshow("Original Image", image);

namedWindow("4 bit Image", WINDOW_AUTOSIZE);

putText(image_4_bit, str_4_bit.str(), cvPoint(30,30),

FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(200,200,250), 1, CV_AA);

imwrite( "../results/quantization/4_bit_image.jpg", image_4_bit);

imshow("4 bit Image", image_4_bit);

namedWindow("2 bit Image", WINDOW_AUTOSIZE);

putText(image_2_bit, str_2_bit.str(), cvPoint(30,30),

FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(200,200,250), 1, CV_AA);

imwrite( "../results/quantization/2_bit_image.jpg", image_2_bit);

imshow("2 bit Image", image_2_bit);

namedWindow("1 bit Image", WINDOW_AUTOSIZE);

putText(image_1_bit, str_1_bit.str(), cvPoint(30,30),

FONT_HERSHEY_COMPLEX_SMALL, 0.8, cvScalar(200,200,250), 1, CV_AA);

imwrite( "../results/quantization/1_bit_image.jpg", image_1_bit);

imshow("1 bit Image", image_1_bit);

waitKey(0);

return 0;

}

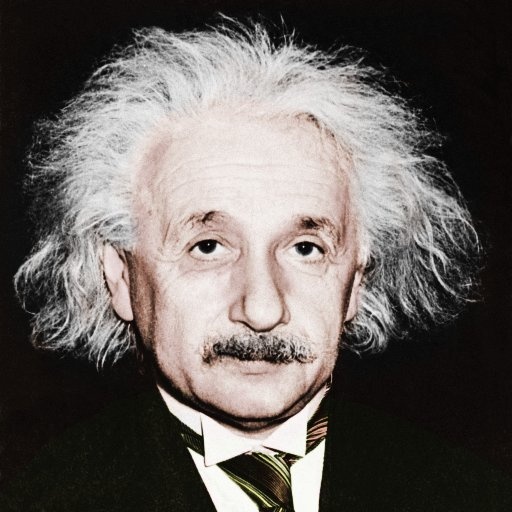

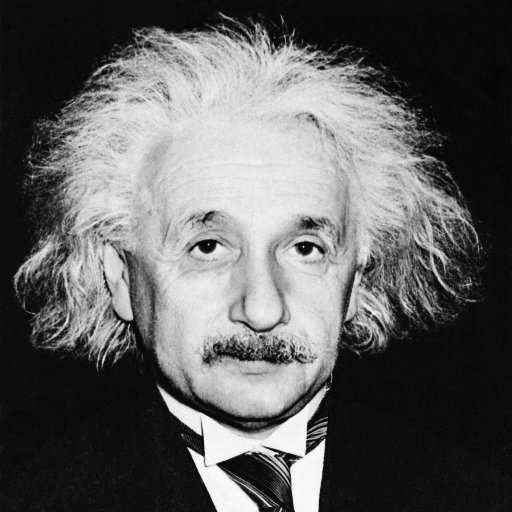

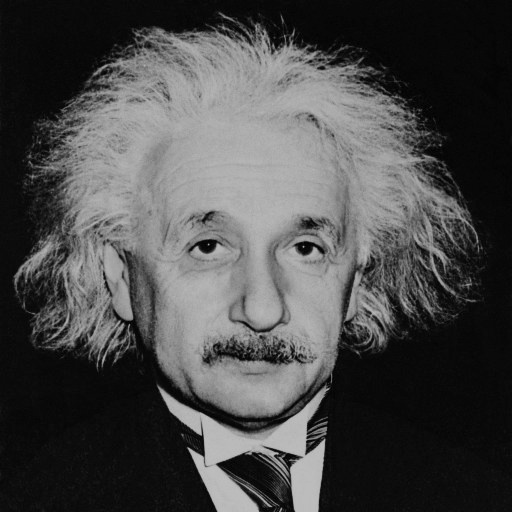

Point operations are memoryless operations that map each intensity level of an image to another level. They can be used to enhance the appearance of an image and to aid feature extraction. Some of the point operations performed on the image of Einstein are shown below.

RGB images can be converted to grayscale by taking a proportion of the value in each color channel.

The inverse operation gives the negative of the image.

The log operation applies a non-linear transformation to the image intensities, expanding dark values and compressing bright ones.

Gamma correction can be done to compensate for the non-linearities in the brightness response of various displays.
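The three intensity mappings can be written as small per-sample functions. The sketch below is an illustration under stated assumptions, not the program in this section: the log constant is chosen so that 255 maps to 255, and gamma correction is applied to intensities normalised to [0, 1], which is the common textbook form. The function names are hypothetical.

```cpp
#include <cmath>
#include <cstdint>

// Negative: mirror the intensity about the middle of the 8-bit range.
std::uint8_t negative(std::uint8_t v) {
    return static_cast<std::uint8_t>(255 - v);
}

// Log transform s = c * log(1 + r), with c chosen (assumption) so 255 maps to 255.
std::uint8_t log_transform(std::uint8_t v) {
    const double c = 255.0 / std::log(256.0);
    return static_cast<std::uint8_t>(std::lround(c * std::log(1.0 + v)));
}

// Gamma correction on normalised intensities: s = 255 * (r / 255)^gamma.
std::uint8_t gamma_correct(std::uint8_t v, double gamma) {
    return static_cast<std::uint8_t>(std::lround(255.0 * std::pow(v / 255.0, gamma)));
}
```

Normalising before applying the exponent keeps black at 0 and white at 255, so a gamma below 1 brightens the mid-tones without shrinking the overall range.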

#include <stdio.h>

#include <opencv2/opencv.hpp>

#include <math.h>

#define R_WEIGHT 0.2989

#define G_WEIGHT 0.5870

#define B_WEIGHT 0.1140

#define TRANSFORMATION_CONSTANT 25

#define GAMMA 0.95

using namespace cv;

Mat convert_to_grayscale(const Mat& input_image)

{

Mat rgb_image = input_image.clone();

Mat grayscale_image = cv::Mat(rgb_image.rows, rgb_image.cols, CV_8UC1, cv::Scalar(0, 0, 0));

for(int j = 0; j < grayscale_image.rows; j++)

for(int i = 0; i < grayscale_image.cols; i++)

{

Vec3b rgb_value = rgb_image.at< Vec3b >(j, i);

// imread returns pixels in BGR order, so index 2 is red and index 0 is blue.

grayscale_image.at< uchar >(j,i) = R_WEIGHT*rgb_value[2] + G_WEIGHT*rgb_value[1] + B_WEIGHT*rgb_value[0] ;

}

return grayscale_image;

}

Mat log_transformation(const Mat& input_image)

{

Mat log_image = input_image.clone();

for(int j = 0; j < log_image.rows ; j++)

for(int i = 0; i < log_image.cols; i++)

{

log_image.at< uchar >(j,i) = TRANSFORMATION_CONSTANT*log(log_image.at< uchar >(j,i)+1);

}

return log_image;

}

Mat inverse_transformation(const Mat& input_image)

{

Mat inverse_image = input_image.clone();

for(int j = 0; j < inverse_image.rows ; j++)

for(int i = 0; i < inverse_image.cols; i++)

{

inverse_image.at< uchar >(j,i) = 255 - inverse_image.at< uchar >(j,i);

}

return inverse_image;

}

Mat gamma_correction(const Mat& input_image)

{

Mat gamma_corrected_image = input_image.clone();

for(int j = 0; j < gamma_corrected_image.rows ; j++)

for(int i = 0; i < gamma_corrected_image.cols; i++)

{

gamma_corrected_image.at< uchar >(j,i) = pow( gamma_corrected_image.at< uchar >(j,i) , GAMMA );

}

return gamma_corrected_image;

}

int main(int argc, char** argv )

{

Mat image, grayscale_image, log_image, inverse_image, gamma_corrected_image;

image = imread( "../images/einstein.jpg", 1 );

if ( !image.data )

{

printf("No image data \n");

return -1;

}

grayscale_image = convert_to_grayscale(image);

inverse_image = inverse_transformation(grayscale_image);

log_image = log_transformation(grayscale_image);

gamma_corrected_image = gamma_correction(grayscale_image);

namedWindow("RGB Image", WINDOW_AUTOSIZE);

imwrite( "../results/point_transformations/rgb_image.jpg", image );

imshow("RGB Image", image);

namedWindow("Grayscale Image", WINDOW_AUTOSIZE);

imwrite( "../results/point_transformations/grayscale_image.jpg", grayscale_image );

imshow("Grayscale Image", grayscale_image);

namedWindow("Inverse Image", WINDOW_AUTOSIZE);

imwrite( "../results/point_transformations/inverse_image.jpg", inverse_image );

imshow("Inverse Image", inverse_image);

namedWindow("Log Image", WINDOW_AUTOSIZE);

imwrite( "../results/point_transformations/log_image.jpg", log_image );

imshow("Log Image", log_image);

namedWindow("Gamma Corrected Image", WINDOW_AUTOSIZE);

imwrite( "../results/point_transformations/gamma_corrected_image.jpg", gamma_corrected_image );

imshow("Gamma Corrected Image", gamma_corrected_image);

waitKey(0);

return 0;

}

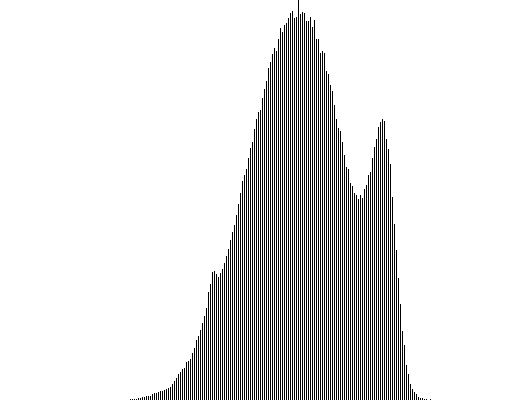

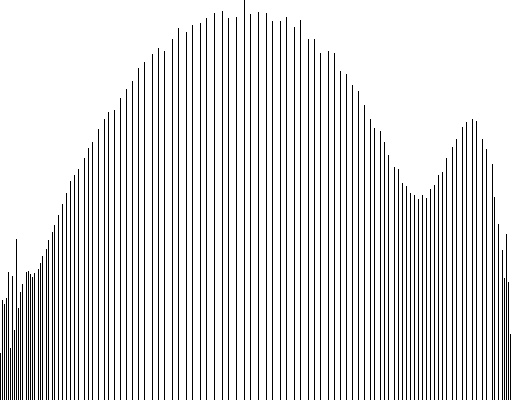

A histogram gives the number of occurrences of each intensity level in an image and can be used to assess image quality. For an image to be visually appealing, its histogram should be spread across the intensity range rather than concentrated in a narrow band. An image with a well-spread histogram has high contrast and generally looks better. Images whose histograms are concentrated in a narrow range can be equalized to increase contrast. A side effect of equalization, however, is that some features may be lost; in this case, the house has disappeared.
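Equalization amounts to remapping each intensity level through the scaled cumulative histogram. The following is a minimal sketch on a flat pixel buffer, assuming the mapping level k to round(255 * CDF(k)); the names `equalization_lut` and `equalize` are hypothetical.

```cpp
#include <array>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Build the lookup table: level k maps to round(255 * CDF(k)).
std::array<std::uint8_t, 256> equalization_lut(const std::vector<std::uint8_t>& pixels) {
    std::array<long, 256> histogram{};  // zero-initialised counts
    for (std::uint8_t p : pixels) ++histogram[p];

    std::array<std::uint8_t, 256> lut{};
    if (pixels.empty()) return lut;
    long cumulative = 0;
    for (std::size_t k = 0; k < 256; ++k) {
        cumulative += histogram[k];
        lut[k] = static_cast<std::uint8_t>(std::lround(255.0 * cumulative / pixels.size()));
    }
    return lut;
}

// Apply the lookup table to every pixel.
std::vector<std::uint8_t> equalize(const std::vector<std::uint8_t>& pixels) {
    const auto lut = equalization_lut(pixels);
    std::vector<std::uint8_t> out;
    out.reserve(pixels.size());
    for (std::uint8_t p : pixels) out.push_back(lut[p]);
    return out;
}
```

A buffer whose values sit in the narrow range 100 to 102 is stretched to span 128 to 255, which is the contrast gain described above; levels that share a bin after rounding become indistinguishable, which is one way features can be lost.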

#include <opencv2/opencv.hpp>

#include <stdio.h>

#include <sstream>

#define R_WEIGHT 0.2989

#define G_WEIGHT 0.5870

#define B_WEIGHT 0.1140

using namespace cv;

Mat convert_to_grayscale(const Mat& input_image)

{

Mat rgb_image = input_image.clone();

Mat grayscale_image = cv::Mat(rgb_image.rows, rgb_image.cols, CV_8UC1, cv::Scalar(0, 0, 0));

for(int j = 0; j < grayscale_image.rows; j++)

for(int i = 0; i < grayscale_image.cols; i++)

{

Vec3b rgb_value = rgb_image.at< Vec3b >(j, i);

// imread returns pixels in BGR order, so index 2 is red and index 0 is blue.

grayscale_image.at< uchar >(j,i) = R_WEIGHT*rgb_value[2] + G_WEIGHT*rgb_value[1] + B_WEIGHT*rgb_value[0] ;

}

return grayscale_image;

}

void compute_histogram(const Mat& input_image, int histogram[])

{

for (int i = 0 ; i <256 ; i++)

histogram[i] = 0;

// Store the frequency of intensities

for(int j = 0; j < input_image.rows; j++)

for(int i = 0; i < input_image.cols; i++)

{

histogram[input_image.at< uchar >(j,i)]++;

}

}

void display_histogram(int histogram[], const char* name)

{

int hist[256];

for(int i = 0; i < 256; i++)

{

hist[i]=histogram[i];

}

// draw the histograms

int hist_w = 512; int hist_h = 400;

int bin_w = cvRound((double) hist_w/256);

Mat histogram_image(hist_h, hist_w, CV_8UC1, Scalar(255, 255, 255));

// find the maximum intensity element from histogram

int max = hist[0];

for(int i = 1; i < 256; i++){

if(max < hist[i]){

max = hist[i];

}

}

// normalize the histogram between 0 and histImage.rows

for(int i = 0; i < 256; i++){

hist[i] = ((double)hist[i]/max)*histogram_image.rows;

}

// draw the intensity line for histogram

for(int i = 0; i < 256; i++)

{

line(histogram_image, Point(bin_w*(i), hist_h),

Point(bin_w*(i), hist_h - hist[i]),

Scalar(0,0,0), 1, 8, 0);

}

// display histogram

namedWindow(name, WINDOW_AUTOSIZE);

imshow(name, histogram_image);

std::ostringstream display_string ;

display_string<<"../results/histogram_equalization/"<< name <<".jpg";

imwrite( display_string.str(), histogram_image );

}

void compute_cumulative_histogram(int histogram[], int cumulative_histogram[])

{

cumulative_histogram[0] = histogram[0];

for(int j = 1; j < 256 ; j++)

{

cumulative_histogram[j] = histogram[j]+cumulative_histogram[j-1];

}

}

void scale_histogram(int cumulative_histogram[],int scaled_histogram[], float scaling_factor)

{

for(int j = 0; j < 256 ; j++)

{

scaled_histogram[j] = cvRound(cumulative_histogram[j]*scaling_factor);

}

}

Mat equalize_image(const Mat& input_image, int histogram[])

{

int cumulative_histogram[256], scaled_histogram[256];

int image_size = input_image.rows*input_image.cols;

// Compute alpha (scaling factor) based on total number of pixels and maximum intensity

float scaling_factor = 255.0/image_size;

// Compute probability of each intensity by normalizing the histogram

double intensity_probability[256];

for(int i = 0; i < 256 ; i++)

{

intensity_probability[i] = (double)histogram[i]/image_size; // cast avoids integer division, which would give 0

}

// Compute the cumulative histogram by adding all values of lower intensities

compute_cumulative_histogram(histogram, cumulative_histogram);

// Scaled the cumulative histogram by the scaling factor

scale_histogram(cumulative_histogram, scaled_histogram, scaling_factor);

Mat equalized_image = input_image.clone();

// Equalize the image by looking at the value from scaled histogram at the intensity

// level at the corresponding pixel

for(int j = 0; j < equalized_image.rows; j++)

for(int i = 0; i < equalized_image.cols; i++)

{

equalized_image.at< uchar >(j,i) = saturate_cast< uchar >(scaled_histogram[equalized_image.at< uchar >(j,i)]);

}

return equalized_image;

}

int main(int argc, char** argv )

{

Mat rgb_image, grayscale_image, equalized_image;

rgb_image = imread( "../images/trees.jpg", 1 );

if ( !rgb_image.data )

{

printf("No image data \n");

return -1;

}

int histogram[256], equalized_histogram[256];

grayscale_image = convert_to_grayscale(rgb_image);

compute_histogram(grayscale_image, histogram);

equalized_image = equalize_image(grayscale_image, histogram);

compute_histogram(equalized_image, equalized_histogram);

namedWindow("Original Image", WINDOW_AUTOSIZE);

imwrite( "../results/histogram_equalization/original_image.jpg", rgb_image );

imshow("Original Image", grayscale_image);

display_histogram(histogram, "original_histogram");

namedWindow("Equalized Image", WINDOW_AUTOSIZE);

imwrite( "../results/histogram_equalization/equalized_image.jpg", equalized_image );

imshow("Equalized Image", equalized_image);

display_histogram(equalized_histogram, "equalized_histogram");

waitKey(0);

return 0;

}

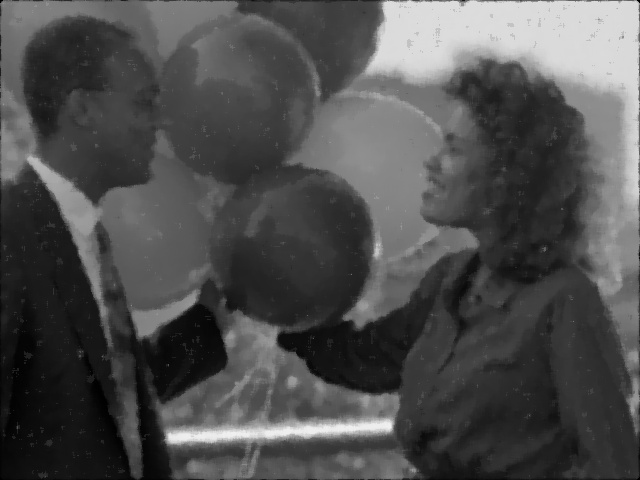

Spatial filters are mainly used to enhance images by removing noise, a process also called smoothing. Linear spatial filters weight neighbouring pixels by their position relative to the centre pixel only, not by their intensity values. Spatial filters can be either linear (such as mean and Gaussian filters) or non-linear (such as median filters). Linear filters (kernels) are usually applied to an image using convolution. The results of applying spatial filters with a kernel size of 7 x 7 to noisy images are shown below.

In the mean filter, an averaging kernel is convolved with the image. Although the noise is reduced, it is smeared into the neighbouring pixels rather than removed, and the image is blurred.

The Gaussian kernel gives more weight to closer pixels than to distant ones. When such a filter is convolved with images containing salt-and-pepper noise, the results are poor, because each impulse is spread over its neighbours instead of being removed.

The median filter is a non-linear filter that replaces each pixel with the median of its neighbourhood. As seen below, this filter has almost perfectly restored the noisy image.
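The difference between averaging and taking the median is easiest to see on a one-dimensional signal with a single impulse. The sketch below is a simplified illustration, assuming a window of 2 * radius + 1 samples and unchanged border samples; `mean_filter_1d` and `median_filter_1d` are hypothetical names.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// 1-D mean filter; samples closer than `radius` to a border are left unchanged.
std::vector<int> mean_filter_1d(const std::vector<int>& signal, std::size_t radius) {
    std::vector<int> out = signal;
    for (std::size_t i = radius; i + radius < signal.size(); ++i) {
        int sum = 0;
        for (std::size_t k = i - radius; k <= i + radius; ++k) sum += signal[k];
        out[i] = sum / static_cast<int>(2 * radius + 1);
    }
    return out;
}

// 1-D median filter with the same window and border handling.
std::vector<int> median_filter_1d(const std::vector<int>& signal, std::size_t radius) {
    std::vector<int> out = signal;
    for (std::size_t i = radius; i + radius < signal.size(); ++i) {
        std::vector<int> window(signal.begin() + (i - radius),
                                signal.begin() + (i + radius + 1));
        // nth_element places the median at the middle position without a full sort.
        std::nth_element(window.begin(), window.begin() + radius, window.end());
        out[i] = window[radius];
    }
    return out;
}
```

On a flat signal of 10s with one sample at 255, the mean filter spreads the impulse over three samples, whereas the median filter removes it entirely.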

#include <iostream>

#include <stdio.h>

#include <opencv2/opencv.hpp>

#include <math.h>

#include <stdlib.h>

#define PI 3.14159

#define R_WEIGHT 0.2989

#define G_WEIGHT 0.5870

#define B_WEIGHT 0.1140

using namespace cv;

void swap(int & a1,int & a2)

{

a1 = a1 + a2;

a2 = a1 - a2;

a1 = a1 - a2;

}

void merge(int A[ ] , int start, int mid, int end) {

//stores the starting position of both parts in temporary variables.

int p = start ,q = mid+1;

int Arr[end-start+1] , k=0;

for(int i = start ;i <= end ;i++) {

if(p > mid) //checks if first part comes to an end or not .

Arr[ k++ ] = A[ q++] ;

else if ( q > end) //checks if second part comes to an end or not

Arr[ k++ ] = A[ p++ ];

else if( A[ p ] < A[ q ]) //checks which part has smaller element.

Arr[ k++ ] = A[ p++ ];

else

Arr[ k++ ] = A[ q++];

}

for (int p=0 ; p< k ;p ++) {

/* Now the real array has elements in sorted manner including both

parts.*/

A[ start++ ] = Arr[ p ] ;

}

}

void merge_sort (int A[ ] , int start , int end ) {

if( start < end ) {

int mid = (start + end ) / 2 ; // defines the current array in 2 parts .

merge_sort (A, start , mid ) ; // sort the 1st part of array .

merge_sort (A,mid+1 , end ) ; // sort the 2nd part of array.

// merge the both parts by comparing elements of both the parts.

merge(A,start , mid , end );

}

}

Mat convert_to_grayscale(const Mat& input_image)

{

Mat rgb_image = input_image.clone();

Mat grayscale_image = cv::Mat(rgb_image.rows, rgb_image.cols, CV_8UC1, cv::Scalar(0, 0, 0));

for(int j = 0; j < grayscale_image.rows; j++)

for(int i = 0; i < grayscale_image.cols; i++)

{

Vec3b rgb_value = rgb_image.at< Vec3b >(j, i);

// imread returns pixels in BGR order, so index 2 is red and index 0 is blue.

grayscale_image.at< uchar >(j,i) = R_WEIGHT*rgb_value[2] + G_WEIGHT*rgb_value[1] + B_WEIGHT*rgb_value[0] ;

}

return grayscale_image;

}

Mat image_padding(const Mat& input_image, int offset)

{

Mat padded_image = Mat(input_image.rows+2*offset, input_image.cols+2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

padded_image.at< uchar >(j+offset,i+offset) = input_image.at< uchar >(j,i);

}

return padded_image;

}

Mat image_depadding(const Mat& input_image, int offset)

{

Mat depadded_image = Mat(input_image.rows-2*offset, input_image.cols-2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows-2*offset ; j++)

for(int i = 0; i < input_image.cols-2*offset; i++)

{

depadded_image.at< uchar >(j,i) = input_image.at< uchar >(j+offset,i+offset);

}

return depadded_image;

}

Mat convolve(const Mat& input_image, const Mat& kernel)

{

int kernel_size = kernel.rows;

int offset;

if(kernel_size % 2 != 0)

{

offset = (kernel_size+1)/2 - 1;

}

else

{

offset = (kernel_size)/2 - 1;

}

Mat padded_image = image_padding(input_image, offset);

Mat flipped_kernel = Mat(kernel.rows, kernel.cols, CV_32F, 0.0);

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

flipped_kernel.at< float >(m,n) = kernel.at< float >(kernel_size-m-1,kernel_size-n-1);

}

Mat convolved_image = Mat(padded_image.rows, padded_image.cols, CV_8UC1, 0.0);

float value = 0.0;

for(int j = offset; j < padded_image.rows - offset ; j++)

for(int i = offset; i < padded_image.cols - offset; i++)

{

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

value += (padded_image.at< uchar >(j+m-offset,i+n-offset))*flipped_kernel.at< float >(m,n);

}

convolved_image.at< uchar >(j,i) = (int)value;

value = 0;

}

Mat depadded_image = image_depadding(convolved_image, offset);

return depadded_image;

}

Mat gaussian_filter(const Mat& input_image, int kernel_size, float sigma)

{

Mat kernel = Mat(kernel_size, kernel_size, CV_32F, 0.0);

int k;

if(kernel_size % 2 != 0)

{

k = (kernel_size-1)/2;

}

else

{

k = (kernel_size)/2;

}

float value = 0.0,value2 = 0.0;

// Create the Gaussian Kernel

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = (float)( 1.0 /( 2.0*PI*pow(sigma,2) ) ) * exp(-1.0* ( pow(i+1-(k+1),2) + pow(j+1-(k+1),2) ) / ( 2.0 * pow(sigma,2) ) ) ;

value += kernel.at< float >(j,i);

}

// Normalize kernel , so that the sum of all elements in the kernel is 1

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = kernel.at< float >(j,i)/value ;

}

Mat filtered_image = convolve(input_image, kernel);

return filtered_image;

}

Mat mean_filter(const Mat& input_image, int kernel_size)

{

Mat kernel = Mat(kernel_size, kernel_size, CV_32F, 0.0);

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = ( 1.0 / pow(kernel_size,2)) ;

}

Mat filtered_image = convolve(input_image, kernel);

return filtered_image;

}

Mat median_filter(const Mat& input_image, int kernel_size)

{

int offset;

if(kernel_size % 2 != 0)

{

offset = (kernel_size+1)/2 - 1;

}

else

{

offset = (kernel_size)/2 - 1;

}

Mat padded_image = image_padding(input_image, offset);

Mat filtered_image = Mat(padded_image.rows, padded_image.cols, CV_8UC1, 0.0);

int size = kernel_size*kernel_size;

int neighborhood[size];

int k;

int mid ;

if(kernel_size % 2 != 0)

{

mid = (size+1)/2-1;

}

else

{

mid = (size)/2-1;

}

float value = 0.0;

for(int j = offset; j < padded_image.rows - offset ; j++)

for(int i = offset; i < padded_image.cols - offset; i++)

{

k = 0;

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

neighborhood[k] = padded_image.at< uchar >(j+m-offset,i+n-offset) ;

k++;

}

merge_sort(neighborhood,0,size-1);

filtered_image.at< uchar >(j,i) = neighborhood[mid];

}

Mat depadded_image = image_depadding(filtered_image, offset);

return depadded_image;

}

int main(int argc, char** argv )

{

Mat image, grayscale_image, gaussian_filtered_image, mean_filtered_image, median_filtered_image;

image = imread( "../images/balloons.png", 1 );

if ( !image.data )

{

printf("No image data \n");

return -1;

}

grayscale_image = convert_to_grayscale(image);

gaussian_filtered_image = gaussian_filter(grayscale_image,7,1.4);

mean_filtered_image = mean_filter(grayscale_image,7);

median_filtered_image = median_filter(grayscale_image,7);

namedWindow("Original Image", WINDOW_AUTOSIZE);

imwrite( "../results/filter/grayscale_image.jpg", grayscale_image );

imshow("Original Image", grayscale_image);

namedWindow("Gaussian Filtered Image", WINDOW_AUTOSIZE);

imwrite( "../results/filter/gaussian_filtered_image.jpg", gaussian_filtered_image );

imshow("Gaussian Filtered Image", gaussian_filtered_image );

namedWindow("Mean Filtered Image", WINDOW_AUTOSIZE);

imwrite( "../results/filter/mean_filtered_image.jpg", mean_filtered_image );

imshow("Mean Filtered Image", mean_filtered_image);

namedWindow("Median Filtered Image", WINDOW_AUTOSIZE);

imwrite( "../results/filter/median_filtered_image.jpg", median_filtered_image );

imshow("Median Filtered Image", median_filtered_image);

waitKey(0);

return 0;

}

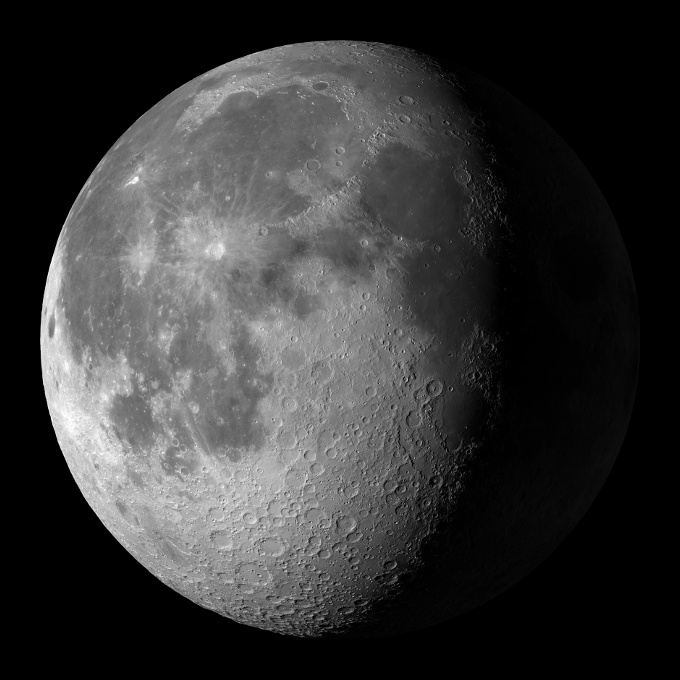

Sharpening is the process by which the edges of an image are enhanced. It is a three-step process. First, the image is low-pass filtered using either an averaging or a Gaussian filter. Second, the low-pass result is subtracted from the input image, which is equivalent to high-pass filtering the image. Third, a proportion of this high-pass filtered image, determined by alpha, is added back to the input image, giving the sharpened image.
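The three steps can be sketched on a one-dimensional signal. This is a minimal illustration, assuming a 3-tap averaging kernel as the low-pass filter and a signed high-pass component that is clamped only at the end; `unsharp_mask_1d` is a hypothetical name.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Unsharp masking on a 1-D signal: out = in + alpha * (in - lowpass(in)).
// The first and last samples are left unchanged.
std::vector<int> unsharp_mask_1d(const std::vector<int>& signal, double alpha) {
    std::vector<int> out = signal;
    for (std::size_t i = 1; i + 1 < signal.size(); ++i) {
        // Step 1: low-pass with a 3-tap averaging kernel.
        const double low = (signal[i - 1] + signal[i] + signal[i + 1]) / 3.0;
        // Step 2: signed high-pass component (negative on the dark side of an edge).
        const double high = signal[i] - low;
        // Step 3: add a fraction back, then clamp to the 8-bit range and round.
        const double sharpened = signal[i] + alpha * high;
        out[i] = static_cast<int>(std::min(255.0, std::max(0.0, sharpened)) + 0.5);
    }
    return out;
}
```

Across a step from 50 to 200 with alpha = 0.5, the sample just before the edge drops to 25 and the one just after rises to 225, so the edge contrast increases. Keeping the high-pass term signed matters here: clipping it to zero before adding it back would only brighten the bright side of the edge.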

#include <iostream>

#include <stdio.h>

#include <opencv2/opencv.hpp>

#include <math.h>

#include <stdlib.h>

#define PI 3.14159

#define R_WEIGHT 0.2989

#define G_WEIGHT 0.5870

#define B_WEIGHT 0.1140

using namespace cv;

Mat convert_to_grayscale(const Mat& input_image)

{

Mat rgb_image = input_image.clone();

Mat grayscale_image = cv::Mat(rgb_image.rows, rgb_image.cols, CV_8UC1, cv::Scalar(0, 0, 0));

for(int j = 0; j < grayscale_image.rows; j++)

for(int i = 0; i < grayscale_image.cols; i++)

{

Vec3b rgb_value = rgb_image.at< Vec3b >(j, i);

// imread returns pixels in BGR order, so index 2 is red and index 0 is blue.

grayscale_image.at< uchar >(j,i) = R_WEIGHT*rgb_value[2] + G_WEIGHT*rgb_value[1] + B_WEIGHT*rgb_value[0] ;

}

return grayscale_image;

}

Mat sharpen(const Mat& input_image, int kernel_size, float alpha)

{

Mat low_pass_filtered_image, high_pass_filtered_image, sharpened_image;

low_pass_filtered_image = input_image.clone();

//Low pass filter the image using a box (averaging) filter; blur() is not a Gaussian filter

blur( input_image, low_pass_filtered_image, Size( kernel_size, kernel_size ));

high_pass_filtered_image = input_image.clone();

sharpened_image = input_image.clone();

for(int j = 0; j < input_image.rows; j++)

for(int i = 0; i < input_image.cols; i++)

{

//High pass filter the image by subtracting the low pass filtered image from the input image; saturate_cast clips negative differences to 0

high_pass_filtered_image.at< uchar >(j,i) = saturate_cast< uchar >(input_image.at< uchar >(j,i) - low_pass_filtered_image.at< uchar >(j,i));

//Sharpen the image by doing weighted addition of the high pass filtered image to the input image and saturate the output

sharpened_image.at< uchar >(j,i) = saturate_cast< uchar >(input_image.at< uchar >(j,i) + alpha*high_pass_filtered_image.at< uchar >(j,i));

}

namedWindow("HPF Image", WINDOW_AUTOSIZE);

imwrite( "../results/sharpening/high_pass_filtered_image.jpg", high_pass_filtered_image);

imshow("HPF Image", high_pass_filtered_image);

namedWindow("LPF Image", WINDOW_AUTOSIZE);

imwrite( "../results/sharpening/low_pass_filtered_image.jpg", low_pass_filtered_image);

imshow("LPF Image", low_pass_filtered_image);

return sharpened_image;

}

int main(int argc, char** argv )

{

Mat image, grayscale_image, sharpened_image, sharpened_image2, sharpened_image3;

image = imread( "../images/moon.png", 1 );

if ( !image.data )

{

printf("No image data \n");

return -1;

}

grayscale_image = convert_to_grayscale(image);

sharpened_image = sharpen(grayscale_image,9,0.5);

namedWindow("Original Image", WINDOW_AUTOSIZE);

imwrite( "../results/sharpening/grayscale_image.jpg", grayscale_image );

imshow("Original Image", grayscale_image);

namedWindow("Sharpened Image", WINDOW_AUTOSIZE);

imwrite( "../results/sharpening/sharpened_image.jpg", sharpened_image );

imshow("Sharpened Image", sharpened_image);

waitKey(0);

return 0;

}

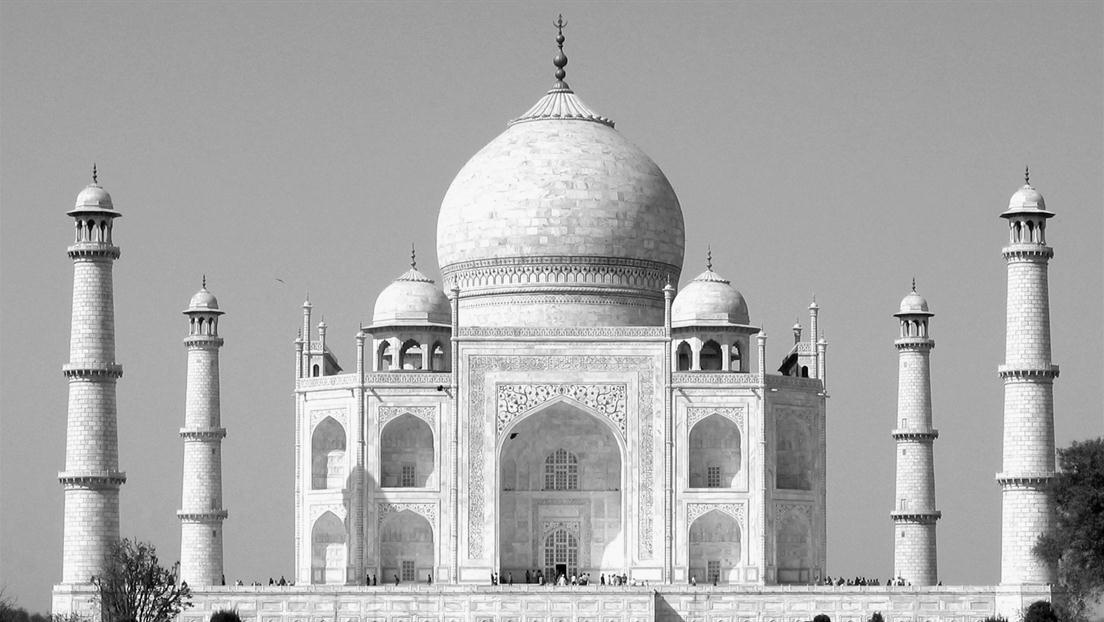

The bilateral filter is a modification of the Gaussian filter. It uses both the spatial distance and the intensity difference between a pixel and its neighbours, so it can remove noise while preserving edges. The filter is non-linear because of the additional range weight that is multiplied with the Gaussian (spatial) weight; hence the computation cannot be done as a simple multiplication in the frequency domain. The results below show that the bilateral filter outperforms the Gaussian filter: it smooths the entire image except at the edges.
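The product of the spatial and range weights can be sketched in one dimension. The following is a simplified illustration, assuming samples outside the signal are ignored and the weights are normalised by their sum; `bilateral_filter_1d`, `sigma_s` and `sigma_r` are names chosen for this sketch.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// 1-D bilateral filter: each neighbour is weighted by its distance (spatial weight)
// and by how close its intensity is to the centre sample (range weight).
std::vector<double> bilateral_filter_1d(const std::vector<double>& signal, int radius,
                                        double sigma_s, double sigma_r) {
    std::vector<double> out(signal.size());
    const int n = static_cast<int>(signal.size());
    for (int i = 0; i < n; ++i) {
        double weighted_sum = 0.0;
        double weight_total = 0.0;
        for (int k = -radius; k <= radius; ++k) {
            const int j = i + k;
            if (j < 0 || j >= n) continue;  // ignore samples outside the signal
            const double spatial = std::exp(-(k * k) / (2.0 * sigma_s * sigma_s));
            const double diff = signal[j] - signal[i];
            const double range = std::exp(-(diff * diff) / (2.0 * sigma_r * sigma_r));
            weighted_sum += spatial * range * signal[j];
            weight_total += spatial * range;
        }
        // weight_total is at least 1 because the centre sample has weight 1.
        out[i] = weighted_sum / weight_total;
    }
    return out;
}
```

Across a step from 10 to 200 with `sigma_r` = 10, the range weight of samples on the far side of the edge is vanishingly small, so each side is averaged only with itself and the step stays sharp.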

#include <iostream>

#include <stdio.h>

#include <math.h>

#include <opencv2/opencv.hpp>

using namespace cv;

Mat image_padding(const Mat& input_image, int offset)

{

Mat padded_image = Mat(input_image.rows+2*offset, input_image.cols+2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

padded_image.at< uchar >(j+offset,i+offset) = input_image.at< uchar >(j,i);

}

return padded_image;

}

Mat image_depadding(const Mat& input_image, int offset)

{

Mat depadded_image = Mat(input_image.rows-2*offset, input_image.cols-2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows-2*offset ; j++)

for(int i = 0; i < input_image.cols-2*offset; i++)

{

depadded_image.at< uchar >(j,i) = input_image.at< uchar >(j+offset,i+offset);

}

return depadded_image;

}

Mat bilateral_convolve(const Mat& input_image, const Mat& gaussian_kernel, float sigma_r)

{

int kernel_size = gaussian_kernel.rows;

int offset;

if(kernel_size % 2 != 0)

{

offset = (kernel_size+1)/2 - 1;

}

else

{

offset = (kernel_size)/2 - 1;

}

Mat padded_image = image_padding(input_image, offset);

Mat convolved_image = Mat(padded_image.rows, padded_image.cols, CV_8UC1, 0.0);

float value, range_weight, weight, cumulative_weight;

int pixel_intensity, neighboring_pixel_intensity ;

for(int j = offset; j < padded_image.rows - offset ; j++)

for(int i = offset; i < padded_image.cols - offset; i++)

{

int pixel_intensity = padded_image.at< uchar >(j,i);

cumulative_weight = 0.0;

value = 0.0;

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

neighboring_pixel_intensity = padded_image.at< uchar >(j+m-offset,i+n-offset);

range_weight = (float) exp(-1.0* ( pow(pixel_intensity - neighboring_pixel_intensity,2) ) / ( 2.0 * pow(sigma_r,2) ) );

weight = gaussian_kernel.at< float >(m,n) * range_weight ;

value += neighboring_pixel_intensity*weight;

cumulative_weight += weight ;

}

convolved_image.at< uchar >(j,i) = (int) (value/cumulative_weight);

}

Mat depadded_image = image_depadding(convolved_image, offset);

return depadded_image;

}

Mat bilateral_filter(const Mat& input_image, int kernel_size, float sigma_g, float sigma_r)

{

Mat kernel = Mat(kernel_size, kernel_size, CV_32F, 0.0);

int k;

if(kernel_size % 2 != 0)

{

k = (kernel_size-1)/2;

}

else

{

k = (kernel_size)/2;

}

// Create the Gaussian Kernel

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = (float) exp(-1.0* ( pow(i+1-(k+1),2) + pow(j+1-(k+1),2) ) / ( 2.0 * pow(sigma_g,2) ) ) ; // ( 1.0 /( 2.0*PI*pow(sigma_g,2) ) ) *

}

Mat filtered_image = bilateral_convolve(input_image, kernel, sigma_r);

return filtered_image;

}

int main(int argc, char** argv )

{

Mat image1,image2, grayscale_image1,grayscale_image2, bilaterally_filtered_image1,bilaterally_filtered_image2, gaussian_filtered_image1,gaussian_filtered_image2;

image1 = imread( "../images/bike.jpg", 1 );

image2 = imread( "../images/tajmahal.jpg", 1 );

if ( !image1.data || !image2.data )

{

printf("No image data \n");

return -1;

}

cvtColor( image1, grayscale_image1, CV_BGR2GRAY );

GaussianBlur(grayscale_image1, gaussian_filtered_image1, Size(7,7),0,0);

bilaterally_filtered_image1 = bilateral_filter(grayscale_image1,7,15,10);

cvtColor( image2, grayscale_image2, CV_BGR2GRAY );

GaussianBlur(grayscale_image2, gaussian_filtered_image2, Size(7,7),0,0);

bilaterally_filtered_image2 = bilateral_filter(grayscale_image2,7,15,10);

namedWindow("Original Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/grayscale_image1.jpg", grayscale_image1 );

imshow("Original Image 1", grayscale_image1);

namedWindow("Bilaterally Filtered Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/bilaterally_filtered_image1.jpg", bilaterally_filtered_image1 );

imshow("Bilaterally Filtered Image 1", bilaterally_filtered_image1 );

namedWindow("Gaussian Filtered Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/gaussian_filtered_image1.jpg", gaussian_filtered_image1);

imshow("Gaussian Filtered Image 1", gaussian_filtered_image1);

namedWindow("Original Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/grayscale_image2.jpg", grayscale_image2 );

imshow("Original Image 2", grayscale_image2);

namedWindow("Bilaterally Filtered Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/bilaterally_filtered_image2.jpg", bilaterally_filtered_image2 );

imshow("Bilaterally Filtered Image 2", bilaterally_filtered_image2 );

namedWindow("Gaussian Filtered Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/bilateral_filter/gaussian_filtered_image2.jpg", gaussian_filtered_image2 );

imshow("Gaussian Filtered Image 2", gaussian_filtered_image2 );

waitKey(0);

return 0;

}

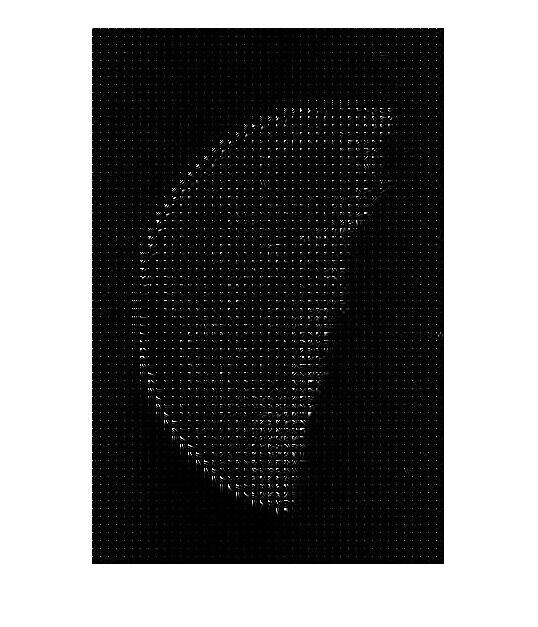

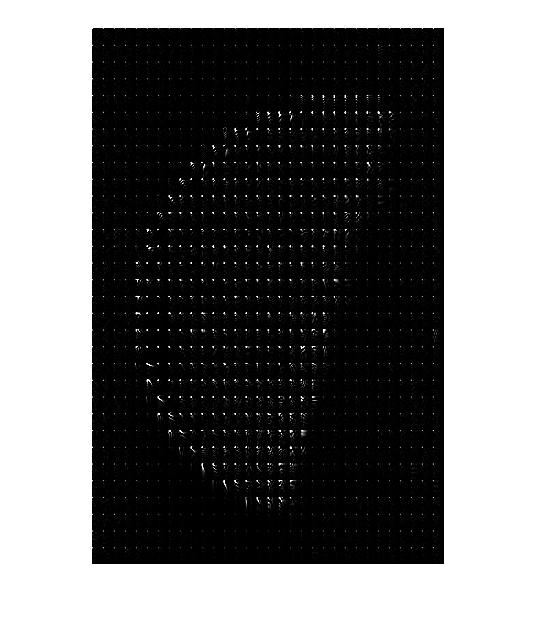

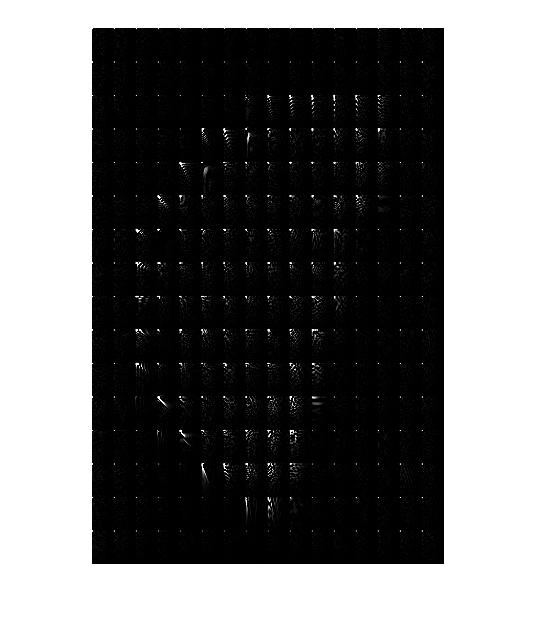

These are non-linear filters that process images based on shape. Dilation replaces each pixel with the maximum value in its neighborhood, and erosion replaces it with the minimum. Depending on whether the edges are black or white, either operation can make the edges thinner or thicker. In the images below, the black edges are made thicker by erosion and thinner by dilation. These kinds of operations are useful in segmentation.
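The min/max behavior described above can be sketched without OpenCV. This is a minimal illustration, not the listing that follows: the `GrayImage` type and the `morph` function are hypothetical names introduced here, and border pixels are assumed to use only the part of the window that lies inside the image.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical row-major grayscale image type used only for this sketch.
struct GrayImage {
    int rows;
    int cols;
    std::vector<std::uint8_t> pixels;  // rows * cols values
    std::uint8_t at(int r, int c) const { return pixels[r * cols + c]; }
};

// Square-window erosion (minimum) or dilation (maximum).
// Assumption: window positions outside the image are simply skipped.
GrayImage morph(const GrayImage& in, int kernel_size, bool dilate) {
    assert(kernel_size % 2 == 1);  // odd window so it has a centre pixel
    const int half = kernel_size / 2;
    GrayImage out{in.rows, in.cols,
                  std::vector<std::uint8_t>(in.pixels.size())};
    for (int r = 0; r < in.rows; ++r) {
        for (int c = 0; c < in.cols; ++c) {
            std::uint8_t best = in.at(r, c);
            for (int dr = -half; dr <= half; ++dr) {
                for (int dc = -half; dc <= half; ++dc) {
                    const int rr = r + dr;
                    const int cc = c + dc;
                    if (rr < 0 || rr >= in.rows || cc < 0 || cc >= in.cols)
                        continue;
                    const std::uint8_t v = in.at(rr, cc);
                    best = dilate ? std::max(best, v) : std::min(best, v);
                }
            }
            out.pixels[r * in.cols + c] = best;
        }
    }
    return out;
}
```

Taking the running minimum or maximum directly avoids sorting the neighborhood, which is all that erosion and dilation need.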

#include <iostream>

#include <string>

#include <opencv2/opencv.hpp>

using namespace cv;

void merge(int A[ ] , int start, int mid, int end) {

//stores the starting position of both parts in temporary variables.

int p = start ,q = mid+1;

int Arr[end-start+1] , k=0;

for(int i = start ;i <= end ;i++) {

if(p > mid) //checks if first part comes to an end or not .

Arr[ k++ ] = A[ q++] ;

else if ( q > end) //checks if second part comes to an end or not

Arr[ k++ ] = A[ p++ ];

else if( A[ p ] < A[ q ]) //checks which part has smaller element.

Arr[ k++ ] = A[ p++ ];

else

Arr[ k++ ] = A[ q++];

}

for (int p=0 ; p< k ;p ++) {

/* Now the real array has elements in sorted manner including both

parts.*/

A[ start++ ] = Arr[ p ] ;

}

}

void merge_sort (int A[ ] , int start , int end ) {

if( start < end ) {

int mid = (start + end ) / 2 ; // defines the current array in 2 parts .

merge_sort (A, start , mid ) ; // sort the 1st part of array .

merge_sort (A,mid+1 , end ) ; // sort the 2nd part of array.

// merge the both parts by comparing elements of both the parts.

merge(A,start , mid , end );

}

}

Mat image_padding(const Mat& input_image, int offset)

{

Mat padded_image = Mat(input_image.rows+2*offset, input_image.cols+2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

padded_image.at< uchar >(j+offset,i+offset) = input_image.at< uchar >(j,i);

}

return padded_image;

}

Mat image_depadding(const Mat& input_image, int offset)

{

Mat depadded_image = Mat(input_image.rows-2*offset, input_image.cols-2*offset, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows-2*offset ; j++)

for(int i = 0; i < input_image.cols-2*offset; i++)

{

depadded_image.at< uchar >(j,i) = input_image.at< uchar >(j+offset,i+offset);

}

return depadded_image;

}

Mat morphological_filter(const Mat& input_image, int kernel_size,std::string type )

{

int offset;

if(kernel_size % 2 != 0)

{

offset = (kernel_size+1)/2 - 1;

}

else

{

offset = (kernel_size)/2 - 1;

}

Mat padded_image = image_padding(input_image, offset);

Mat filtered_image = Mat(padded_image.rows, padded_image.cols, CV_8UC1, 0.0);

int size = kernel_size*kernel_size;

int neighborhood[size];

int k;

float value = 0.0;

for(int j = offset; j < padded_image.rows - offset ; j++)

for(int i = offset; i < padded_image.cols - offset; i++)

{

k = 0;

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

neighborhood[k] = padded_image.at< uchar >(j+m-offset,i+n-offset) ;

k++;

}

merge_sort(neighborhood,0,size-1);

if(type == "erosion")

filtered_image.at< uchar >(j,i) = neighborhood[0];

else if(type == "dilation")

filtered_image.at< uchar >(j,i) = neighborhood[k-1];

}

Mat depadded_image = image_depadding(filtered_image, offset);

return depadded_image;

}

int main(int argc, char** argv )

{

Mat image1, grayscale_image1, eroded_image1, dilated_image1,image2, grayscale_image2, eroded_image2, dilated_image2;

image1 = imread( "../images/letter_a.jpg", 1 );

image2 = imread( "../images/fingerprint.png", 1 );

if ( !image1.data || !image2.data )

{

printf("No image data \n");

return -1;

}

cvtColor( image1, grayscale_image1, CV_BGR2GRAY );

eroded_image1 = morphological_filter(grayscale_image1,3,"erosion");

dilated_image1 = morphological_filter(grayscale_image1,3,"dilation");

cvtColor( image2, grayscale_image2, CV_BGR2GRAY );

eroded_image2 = morphological_filter(grayscale_image2,3,"erosion");

dilated_image2 = morphological_filter(grayscale_image2,3,"dilation");

namedWindow("Original Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/grayscale_image1.jpg", grayscale_image1 );

imshow("Original Image 1", grayscale_image1);

namedWindow("Eroded Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/eroded_image1.jpg", eroded_image1 );

imshow("Eroded Image 1", eroded_image1 );

namedWindow("Dilated Image 1", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/dilated_image1.jpg", dilated_image1 );

imshow("Dilated Image 1", dilated_image1);

namedWindow("Original Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/grayscale_image2.jpg", grayscale_image2 );

imshow("Original Image 2", grayscale_image2);

namedWindow("Eroded Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/eroded_image2.jpg", eroded_image2 );

imshow("Eroded Image 2", eroded_image2 );

namedWindow("Dilated Image 2", WINDOW_AUTOSIZE);

imwrite( "../results/morphological_filter/dilated_image2.jpg", dilated_image2 );

imshow("Dilated Image 2", dilated_image2 );

waitKey(0);

return 0;

}

For each of the images below, the type of noise present is identified, and a suitable filtering technique is chosen and applied to remove it.

The above image is very bright. To make its features visible, the contrast has to be enhanced. This can be done by equalizing its histogram so that the intensities are spread across the full range rather than concentrated around a particular intensity.

The above image is very dark and, similar to the previous example, its contrast has to be enhanced by histogram equalization.
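The histogram equalization used for the two images above can be sketched as follows. This is a minimal illustration under stated assumptions: the image is a flat vector of 8-bit pixels, `equalize_histogram` is a hypothetical name, and the mapping is the common cumulative-distribution form rather than necessarily the exact variant used for the results here.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Maps each intensity through the normalized cumulative histogram so the
// output intensities spread over the full 0..255 range.
std::vector<std::uint8_t> equalize_histogram(
    const std::vector<std::uint8_t>& pixels) {
    assert(!pixels.empty());

    // Count how often each intensity occurs.
    std::array<std::size_t, 256> histogram{};
    for (std::uint8_t p : pixels) ++histogram[p];

    // Cumulative distribution of intensities.
    std::array<std::size_t, 256> cdf{};
    std::size_t running = 0;
    for (int level = 0; level < 256; ++level) {
        running += histogram[level];
        cdf[level] = running;
    }

    // Smallest non-zero cumulative count (the darkest occupied level).
    std::size_t cdf_min = 0;
    for (int level = 0; level < 256; ++level) {
        if (cdf[level] != 0) { cdf_min = cdf[level]; break; }
    }

    const std::size_t total = pixels.size();
    if (total == cdf_min) return pixels;  // constant image: nothing to spread

    std::vector<std::uint8_t> out(pixels.size());
    for (std::size_t i = 0; i < pixels.size(); ++i) {
        const double scaled =
            (cdf[pixels[i]] - cdf_min) * 255.0 / (total - cdf_min);
        out[i] = static_cast<std::uint8_t>(std::lround(scaled));
    }
    return out;
}
```

For example, four bright pixels with intensities 200, 210, 220 and 230 are mapped to 0, 85, 170 and 255, so a narrow bright band is stretched over the whole range.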

The equalized image is a bit noisy and has small, continuous perturbations. A Gaussian filter can be applied to it in order to smooth the image and reduce the noise.

The noise in the above image can be classified as salt-and-pepper noise. This kind of noise is characterized by a random sprinkle of extreme intensities across the image. To remove such noise, a non-linear filter such as a median filter is used.

Source : http://cnx.org/resources/4181ddf62e3a2047d36357f16180ce247b094532/plane_noise1.png

The above image has salt and pepper noise as well. A median filter can remove it easily.

Source : en.wikipedia.org/wiki/File:Phase_correlation.png
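The median filtering suggested above for salt-and-pepper noise can be sketched as follows. This is a minimal illustration, assuming a flat row-major 8-bit image and replicated borders; `median_filter` is a hypothetical name and is not the function used to produce the results here.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Replaces each pixel with the median of its square neighborhood.
// Assumption: coordinates outside the image are clamped to the nearest edge.
std::vector<std::uint8_t> median_filter(
    const std::vector<std::uint8_t>& pixels, int rows, int cols,
    int kernel_size) {
    assert(kernel_size % 2 == 1);
    assert(pixels.size() == static_cast<std::size_t>(rows) * cols);

    const int half = kernel_size / 2;
    std::vector<std::uint8_t> out(pixels.size());
    std::vector<std::uint8_t> window;
    window.reserve(static_cast<std::size_t>(kernel_size) * kernel_size);

    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            window.clear();
            for (int dr = -half; dr <= half; ++dr) {
                for (int dc = -half; dc <= half; ++dc) {
                    const int rr = std::min(std::max(r + dr, 0), rows - 1);
                    const int cc = std::min(std::max(c + dc, 0), cols - 1);
                    window.push_back(pixels[rr * cols + cc]);
                }
            }
            // Partial sort that places the median at the middle position.
            auto mid = window.begin() + window.size() / 2;
            std::nth_element(window.begin(), mid, window.end());
            out[r * cols + c] = *mid;
        }
    }
    return out;
}
```

Because isolated extreme values never reach the middle of the sorted neighborhood, single salt or pepper pixels are discarded rather than averaged into their surroundings, which is why a median filter suits this kind of noise better than a Gaussian filter.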

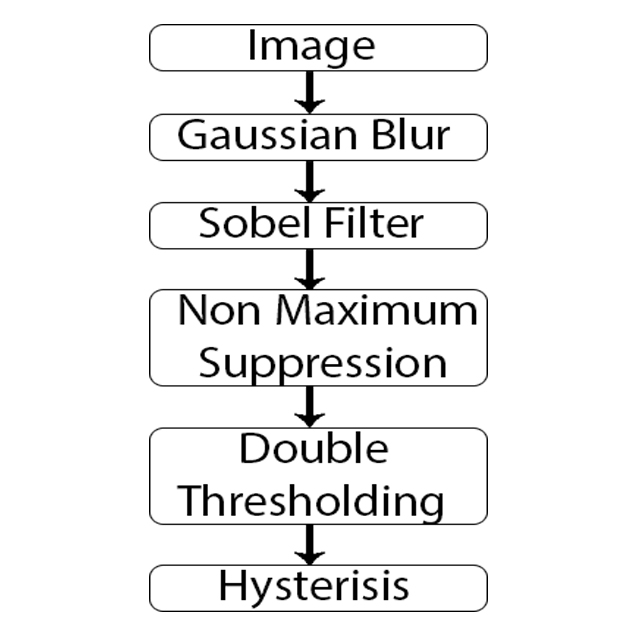

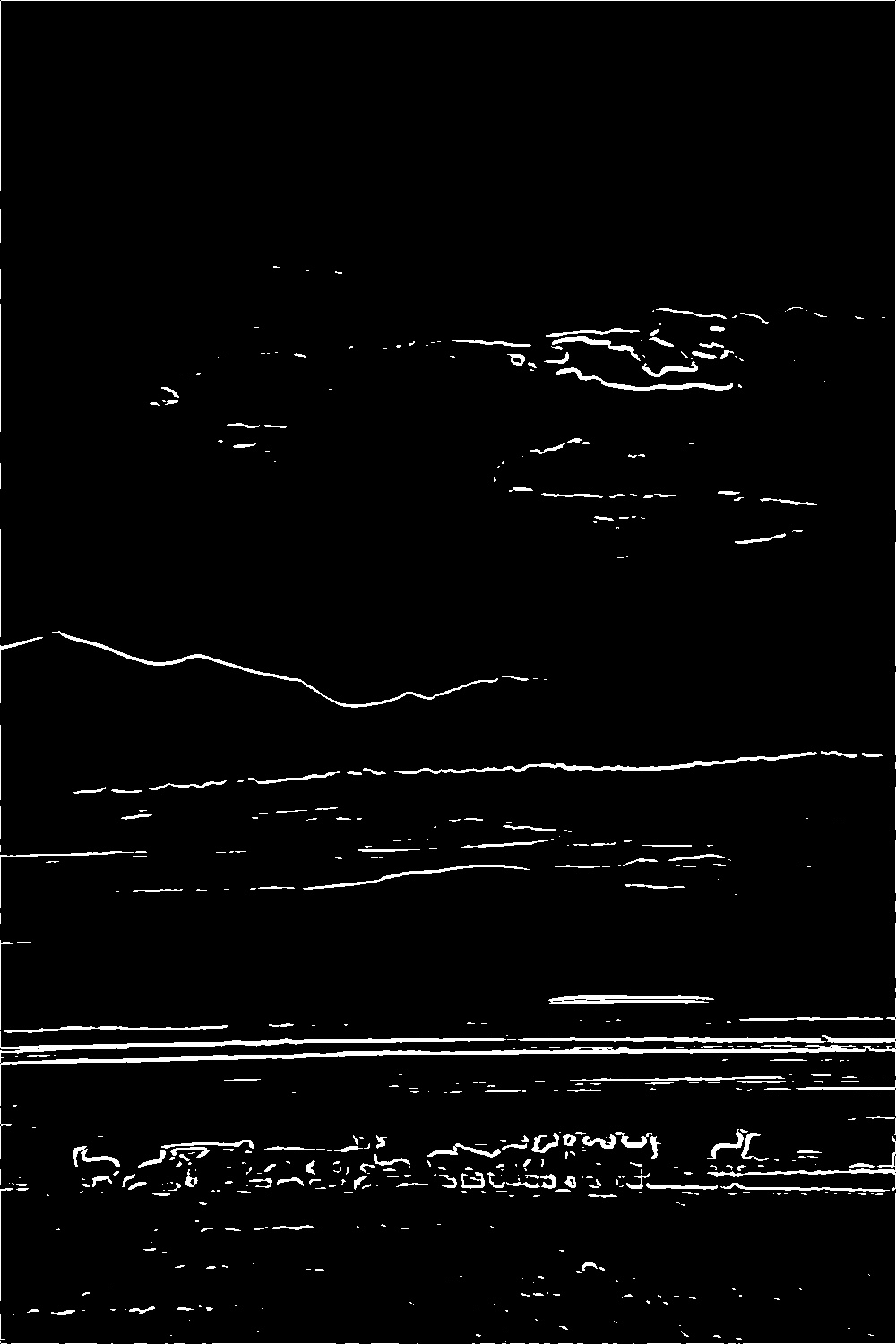

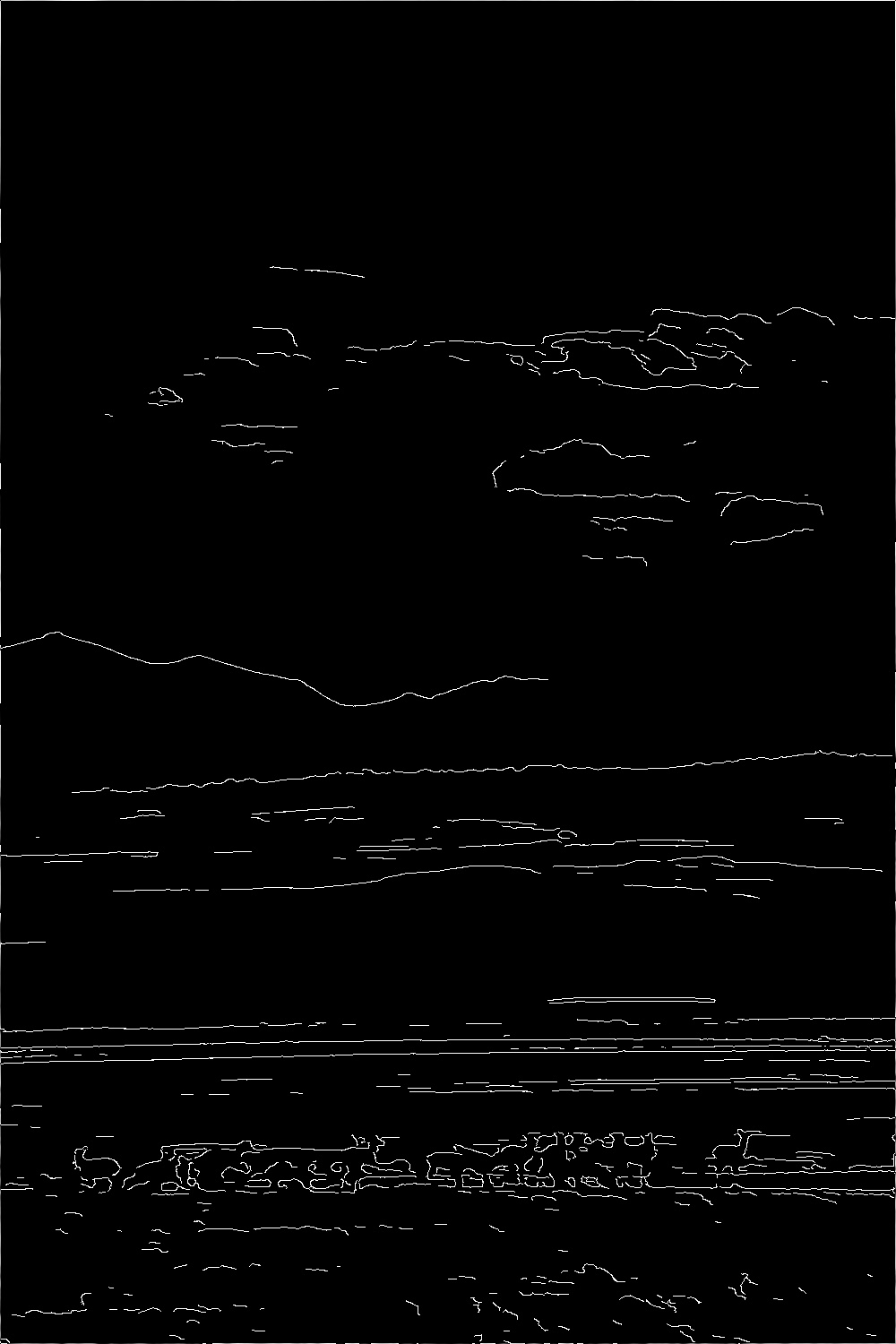

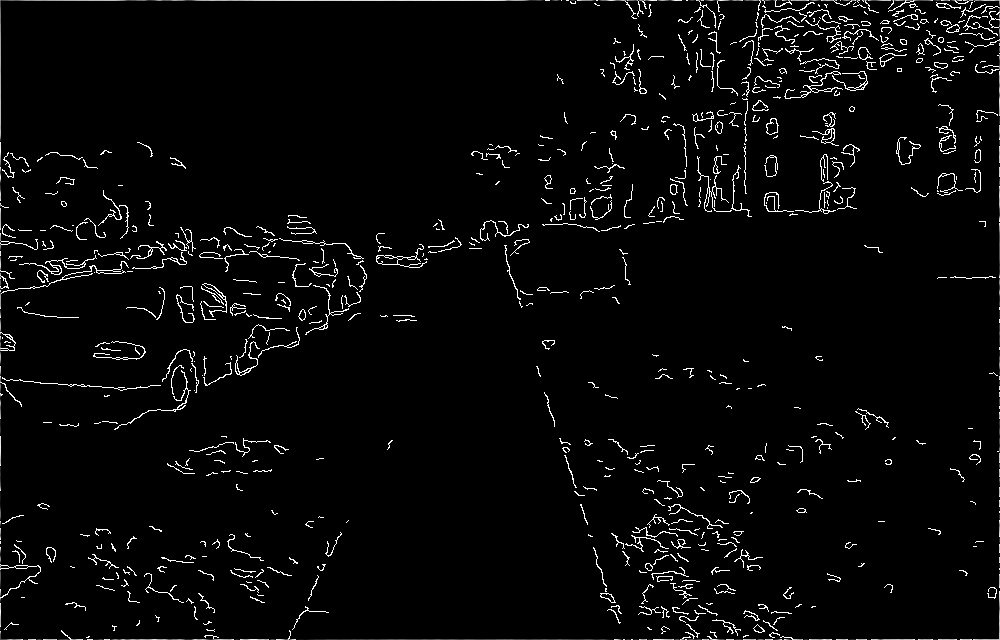

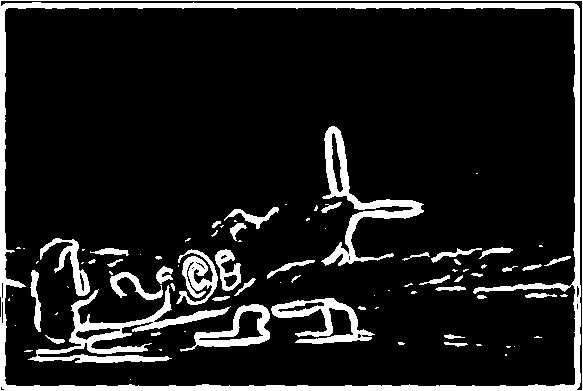

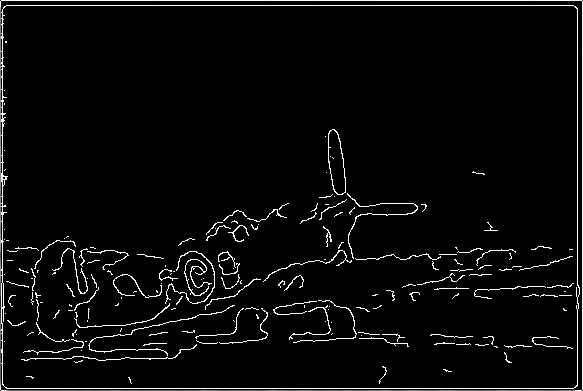

Canny edge detection is a multi-step algorithm that builds on and refines other edge detection operators such as Prewitt, Roberts and Sobel. First, the input image is smoothed with a Gaussian filter. Then an edge detection operator is applied to the image, and the intensity gradient magnitude and direction are computed. Based on the gradient and its direction, non-maximum suppression is applied to make the edges thinner. Strong and weak edges in the resulting image are identified by double thresholding. The weak edges are then tracked to see whether they are connected to strong edges; a connected weak edge is treated as a strong edge, and an unconnected one is eliminated. Comparing this method with a traditional edge detection operator like Sobel, the examples below show that the Canny edge detector produces thinner edges and fewer false edges than the Sobel edge detector.
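The double thresholding and edge tracking steps described above can be sketched as follows. This is a minimal illustration, not the listing below: `hysteresis_threshold` is a hypothetical name, the input is assumed to be the gradient magnitude after non-maximum suppression stored as a flat row-major vector, and weak edges are followed with an explicit stack so that whole chains of weak pixels attached to a strong pixel are kept.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Returns 255 for edge pixels and 0 otherwise.
// Strong pixels (>= high) seed the search; weak pixels (>= low) are kept
// only if they are 8-connected to a strong pixel, directly or via weak ones.
std::vector<std::uint8_t> hysteresis_threshold(
    const std::vector<float>& magnitude, int rows, int cols,
    float low, float high) {
    assert(low <= high);
    assert(magnitude.size() == static_cast<std::size_t>(rows) * cols);

    std::vector<std::uint8_t> edges(magnitude.size(), 0);
    std::vector<std::pair<int, int>> stack;

    // Seed the search with every strong pixel.
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            if (magnitude[r * cols + c] >= high) {
                edges[r * cols + c] = 255;
                stack.push_back(std::make_pair(r, c));
            }
        }
    }

    // Grow each edge through neighboring weak pixels.
    while (!stack.empty()) {
        const int r = stack.back().first;
        const int c = stack.back().second;
        stack.pop_back();
        for (int dr = -1; dr <= 1; ++dr) {
            for (int dc = -1; dc <= 1; ++dc) {
                const int rr = r + dr;
                const int cc = c + dc;
                if (rr < 0 || rr >= rows || cc < 0 || cc >= cols) continue;
                const std::size_t idx =
                    static_cast<std::size_t>(rr) * cols + cc;
                if (edges[idx] == 0 && magnitude[idx] >= low) {
                    edges[idx] = 255;
                    stack.push_back(std::make_pair(rr, cc));
                }
            }
        }
    }
    return edges;
}
```

With `low = 20` and `high = 100`, the row `{120, 50, 50, 5, 50}` keeps the first three pixels: the two weak pixels are chained to the strong one, while the last weak pixel is cut off by the value below `low` and is dropped.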

#include <iostream>

#include <sstream>

#include <opencv2/opencv.hpp>

#include <math.h>

#include <string>

#define PI 3.14159

#define THRESHOLD 35

#define INTERPOLATION true

#define STRONG_EDGE 2

#define WEAK_EDGE 1

#define NOT_EDGE 0

#define THRESHOLD_RATIO_H 0.24

#define THRESHOLD_RATIO_L 0.25

using namespace cv;

Mat image_padding(const Mat& input_image, int offset)

{

Mat padded_image = Mat(input_image.rows+2*offset, input_image.cols+2*offset, CV_32F, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

padded_image.at< float >(j+offset,i+offset) = input_image.at< uchar >(j,i);

}

return padded_image;

}

Mat image_depadding(const Mat& input_image, int offset)

{

Mat depadded_image = Mat(input_image.rows-2*offset, input_image.cols-2*offset, CV_32F, 0.0);

for(int j = 0; j < input_image.rows-2*offset ; j++)

for(int i = 0; i < input_image.cols-2*offset; i++)

{

depadded_image.at< float >(j,i) = input_image.at< float >(j+offset,i+offset);

}

return depadded_image;

}

Mat convolve(const Mat& input_image, const Mat& kernel)

{

int kernel_size = kernel.rows;

int offset;

if(kernel_size % 2 != 0)

{

offset = (kernel_size+1)/2 - 1;

}

else

{

offset = (kernel_size)/2 - 1;

}

Mat padded_image = image_padding(input_image, offset);

Mat flipped_kernel = Mat(kernel.rows, kernel.cols, CV_32F, 0.0);

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

flipped_kernel.at< float >(m,n) = kernel.at< float >(kernel_size-m-1,kernel_size-n-1);

}

Mat convolved_image = Mat(padded_image.rows, padded_image.cols, CV_32F, 0.0);

float value = 0.0;

for(int j = offset; j < padded_image.rows - offset ; j++)

for(int i = offset; i < padded_image.cols - offset; i++)

{

for(int m = 0; m < kernel_size ; m++)

for(int n = 0; n < kernel_size; n++)

{

value += (padded_image.at< float >(j+m-offset,i+n-offset))*flipped_kernel.at< float >(m,n);

}

convolved_image.at< float >(j,i) = value;

value = 0;

}

Mat depadded_image = image_depadding(convolved_image, offset);

return depadded_image;

}

Mat get_image_gradient(const Mat& input_image, const Mat& horizontal_image, const Mat& vertical_image)

{

Mat filtered_image = Mat(input_image.rows, input_image.cols, CV_8UC1, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

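// Clamp the gradient magnitude to 0..255 so large values do not wrap around when stored as uchar.

filtered_image.at< uchar >(j,i) = saturate_cast< uchar >( sqrt( pow(horizontal_image.at< float >(j,i),2) + pow(vertical_image.at< float >(j,i),2) ) ) ;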

}

return filtered_image;

}

Mat get_image_gradient_direction(const Mat& input_image, const Mat& horizontal_image, const Mat& vertical_image)

{

Mat filtered_image = Mat(input_image.rows, input_image.cols, CV_32F, 0.0);

for(int j = 0; j < input_image.rows ; j++)

for(int i = 0; i < input_image.cols; i++)

{

filtered_image.at< float >(j,i) =(float)(atan2( vertical_image.at< float >(j,i), horizontal_image.at< float >(j,i))*180/PI);

}

return filtered_image;

}

void threshold_image(const Mat& input_image, Mat& thresholded_image)

{

thresholded_image = input_image.clone();

for(int j = 0; j < input_image.rows; j++)

for(int i =0; i < input_image.cols; i++)

{

if (input_image.at< uchar >(j,i) >= THRESHOLD)

thresholded_image.at< uchar >(j,i) = 255;

else

thresholded_image.at< uchar >(j,i) = 0;

}

}

Mat non_maximum_suppression(const Mat& image_gradient,const Mat& gradient_direction, const Mat& horizontal_image, const Mat& vertical_image)

{

Mat nms_image = image_gradient.clone();

for(int j = 1; j < image_gradient.rows-1; j++)

for(int i =1; i < image_gradient.cols-1; i++)

{

float angle = gradient_direction.at< float >(j,i);

float top_elements[2];

float bottom_elements[2];

float ratio;

float current_gradient = image_gradient.at< uchar >(j,i) ;

float bottom_interpolation,top_interpolation;

if(INTERPOLATION)

{

if( (angle >= 0 && angle <= 45) || (angle < -135 && angle >= -180))

{

bottom_elements[0] = image_gradient.at< uchar >(j,i+1);

bottom_elements[1] = image_gradient.at< uchar >(j+1,i+1);

top_elements[0] = image_gradient.at< uchar >(j,i-1);

top_elements[1] = image_gradient.at< uchar >(j-1,i-1);

ratio = abs(vertical_image.at< float >(j,i)/current_gradient);

bottom_interpolation = (bottom_elements[1] - bottom_elements[0])*ratio +bottom_elements[0];

top_interpolation = (top_elements[1] - top_elements[0])*ratio +top_elements[0];

if(current_gradient < bottom_interpolation ||

current_gradient < top_interpolation )

{nms_image.at< uchar >(j,i) = 0;}

}

else if( (angle > 45 && angle <= 90) || (angle < -90 && angle >= -135))

{

bottom_elements[0] = image_gradient.at< uchar >(j+1,i);

bottom_elements[1] = image_gradient.at< uchar >(j+1,i+1);

top_elements[0] = image_gradient.at< uchar >(j-1,i);

top_elements[1] = image_gradient.at< uchar >(j-1,i-1);

ratio = abs(horizontal_image.at< float >(j,i)/current_gradient);

bottom_interpolation = (bottom_elements[1] - bottom_elements[0])*ratio +bottom_elements[0];

top_interpolation = (top_elements[1] - top_elements[0])*ratio +top_elements[0];

if(current_gradient < bottom_interpolation ||

current_gradient < top_interpolation )

{nms_image.at< uchar >(j,i) = 0; }

}

else if( (angle > 90 && angle <= 135) || (angle < -45 && angle >= -90))

{

bottom_elements[0] = image_gradient.at< uchar >(j+1,i);

bottom_elements[1] = image_gradient.at< uchar >(j+1,i-1);

top_elements[0] = image_gradient.at< uchar >(j-1,i);

top_elements[1] = image_gradient.at< uchar >(j-1,i+1);

ratio = abs(horizontal_image.at< float >(j,i)/current_gradient);

bottom_interpolation = (bottom_elements[1] - bottom_elements[0])*ratio +bottom_elements[0];

top_interpolation = (top_elements[1] - top_elements[0])*ratio +top_elements[0];

if(current_gradient < bottom_interpolation ||

current_gradient < top_interpolation )

{nms_image.at< uchar >(j,i) = 0; }

}

else if( (angle > 135 && angle <= 180) || (angle < 0 && angle >= -45))

{

bottom_elements[0] = image_gradient.at< uchar >(j,i-1);

bottom_elements[1] = image_gradient.at< uchar >(j+1,i-1);

top_elements[0] = image_gradient.at< uchar >(j,i+1);

top_elements[1] = image_gradient.at< uchar >(j-1,i+1);

ratio = abs(horizontal_image.at< float >(j,i)/current_gradient);

bottom_interpolation = (bottom_elements[1] - bottom_elements[0])*ratio +bottom_elements[0];

top_interpolation = (top_elements[1] - top_elements[0])*ratio +top_elements[0];

if(current_gradient < bottom_interpolation ||

current_gradient < top_interpolation )

{nms_image.at< uchar >(j,i) = 0; }

}

}

else {

// Braces keep each "else if" paired with the angle test rather than with the inner comparison.

if( (angle >= -22.5 && angle <= 22.5) || (angle < -157.5 && angle >= -180))

{

if(image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j,i+1) ||

image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j,i-1) )

{nms_image.at< uchar >(j,i) = 0; }

}

else if( (angle >= 22.5 && angle <= 67.5) || (angle < -112.5 && angle >= -157.5))

{

if(image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j+1,i+1) ||

image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j-1,i-1) )

{nms_image.at< uchar >(j,i) = 0; }

}

else if( (angle >= 67.5 && angle <= 112.5) || (angle < -67.5 && angle >= -112.5))

{

if(image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j+1,i) ||

image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j-1,i) )

{nms_image.at< uchar >(j,i) = 0; }

}

else if( (angle >= 112.5 && angle <= 157.5) || (angle < -22.5 && angle >= -67.5))

{

if(image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j+1,i-1) ||

image_gradient.at< uchar >(j,i) < image_gradient.at< uchar >(j-1,i+1) )

{nms_image.at< uchar >(j,i) = 0; }

}

}

}

return nms_image;

}

bool check_for_connected_strong_edges(int arr[],int size)

{

for(int i = 0; i < size; i++)

{

if ( arr[i] == STRONG_EDGE )

return true;

}

return false;

}

Mat hysterisis(const Mat& input_image, int max_threshold, int min_threshold)

{

Mat double_thresholded_image = input_image.clone() ;

Mat edge_strength_image = input_image.clone();

int neighborhood[9];

int k = 0;

for(int j = 1; j < input_image.rows-1; j++)

for(int i =1; i < input_image.cols-1; i++)

{

if (input_image.at< uchar >(j,i) >= max_threshold)

edge_strength_image.at< uchar >(j,i) = STRONG_EDGE;

else if (input_image.at< uchar >(j,i) > min_threshold && input_image.at< uchar >(j,i) < max_threshold)

edge_strength_image.at< uchar >(j,i) = WEAK_EDGE ;

else

edge_strength_image.at< uchar >(j,i) = NOT_EDGE;

}

for(int j = 1; j < input_image.rows-1; j++)

for(int i =1; i < input_image.cols-1; i++)

{

if (edge_strength_image.at< uchar >(j,i) == WEAK_EDGE)

{

k = 0;

for(int m = -1; m < 2 ; m++)

for(int n = -1; n < 2; n++)

{

neighborhood[k] = edge_strength_image.at< uchar >(j+m,i+n) ;

k++;

}

if( check_for_connected_strong_edges( neighborhood, 9) )

{

double_thresholded_image.at< uchar >(j,i) = 255 ;

edge_strength_image.at< uchar >(j,i) = STRONG_EDGE;

}

else

{

double_thresholded_image.at< uchar >(j,i) = 0 ;

edge_strength_image.at< uchar >(j,i) = WEAK_EDGE;

}

}

else if (edge_strength_image.at< uchar >(j,i) == STRONG_EDGE)

double_thresholded_image.at< uchar >(j,i) = 255 ;

else

double_thresholded_image.at< uchar >(j,i) = 0 ;

}

return double_thresholded_image;

}

void sobel_filter(const Mat& input_image, Mat& image_gradient, Mat& image_gradient_direction, Mat& horizontal_image, Mat& vertical_image)

{

Mat horizontal_kernel = Mat(3, 3, CV_32F, 0.0);

Mat vertical_kernel = Mat(3, 3, CV_32F, 0.0);

Mat nms_image, double_thresholded_image;

horizontal_kernel.at< float >(0,0) = 1 ;

horizontal_kernel.at< float >(0,1) = 0 ;

horizontal_kernel.at< float >(0,2) = -1 ;

horizontal_kernel.at< float >(1,0) = 2 ;

horizontal_kernel.at< float >(1,1) = 0 ;

horizontal_kernel.at< float >(1,2) = -2 ;

horizontal_kernel.at< float >(2,0) = 1 ;

horizontal_kernel.at< float >(2,1) = 0 ;

horizontal_kernel.at< float >(2,2) = -1 ;

vertical_kernel.at< float >(0,0) = 1 ;

vertical_kernel.at< float >(0,1) = 2 ;

vertical_kernel.at< float >(0,2) = 1 ;

vertical_kernel.at< float >(1,0) = 0 ;

vertical_kernel.at< float >(1,1) = 0 ;

vertical_kernel.at< float >(1,2) = 0 ;

vertical_kernel.at< float >(2,0) = -1 ;

vertical_kernel.at< float >(2,1) = -2 ;

vertical_kernel.at< float >(2,2) = -1 ;

horizontal_image = convolve(input_image, horizontal_kernel);

vertical_image = convolve(input_image, vertical_kernel);

image_gradient = get_image_gradient(input_image,horizontal_image, vertical_image);

image_gradient_direction = get_image_gradient_direction(input_image, horizontal_image, vertical_image);

}

void canny_edge_detection(const Mat& input_image, int num)

{

Mat gaussian_filtered_image, image_gradient,nms_image, double_thresholded_image;

Mat gradient_direction, horizontal_image, vertical_image, sobel_filtered_image;

gaussian_filtered_image = input_image.clone();

GaussianBlur(gaussian_filtered_image,gaussian_filtered_image,Size(7,7), 1.4, 1.4, BORDER_DEFAULT);

sobel_filter(gaussian_filtered_image, image_gradient,gradient_direction, horizontal_image, vertical_image);

threshold_image(image_gradient,sobel_filtered_image);

nms_image = non_maximum_suppression(image_gradient,gradient_direction, horizontal_image, vertical_image) ;

double min, max;

cv::minMaxLoc(nms_image, &min, &max);

int max_threshold = max*THRESHOLD_RATIO_H;

int min_threshold = max_threshold*THRESHOLD_RATIO_L;

double_thresholded_image = hysterisis( nms_image, max_threshold, min_threshold);

std::ostringstream path1, path2 ;

path1 << "../results/canny_edge_detection/sobel_edge_detector" << num << ".jpg" ;

path2 << "../results/canny_edge_detection/canny_edge_detector" << num << ".jpg" ;

namedWindow("Sobel Image Gradient", WINDOW_AUTOSIZE);

imwrite( path1.str(), image_gradient );

imshow("Sobel Image Gradient", image_gradient);

namedWindow("Sobel Filter ", WINDOW_AUTOSIZE);

imwrite( path1.str(), sobel_filtered_image );

imshow("Sobel Filter ", sobel_filtered_image);

namedWindow("Non Maximum Suppression", WINDOW_AUTOSIZE);

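// Build the file name with a stream; adding an int to a string literal does not concatenate.

std::ostringstream path3 ;

path3 << "../results/edge_detection/nms_image" << num << ".jpg" ;

imwrite( path3.str(), nms_image );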

imshow("Non Maximum Suppression", nms_image);

namedWindow("Hysterisis", WINDOW_AUTOSIZE);

imwrite(path2.str(), double_thresholded_image );

imshow("Hysterisis", double_thresholded_image);

}

int main(int argc, char** argv )

{

Mat grayscale_image1,grayscale_image2,grayscale_image3,grayscale_image4,grayscale_image5;

Mat image1 = imread( "../results/filter/equalized_image_1.jpg", 1 );

Mat image2 = imread( "../results/filter/gaussian_filtered_image_2.jpg", 1 );

Mat image3 = imread( "../results/filter/median_filtered_image_3.jpg", 1 );

//http://cnx.org/resources/4181ddf62e3a2047d36357f16180ce247b094532/plane_noise1.png

Mat image4 = imread( "../results/filter/median_filtered_image_4.jpg", 1 );

//en.wikipedia.org/wiki/File:Phase_correlation.png

Mat image5 = imread( "../results/filter/gaussian_filtered_image_5.jpg", 1 );

// Check that every image was loaded before any of them is processed.

if ( !image1.data || !image2.data || !image3.data || !image4.data || !image5.data)

{

printf("No image data \n");

return -1;

}

cv::Mat resized_image2 = cv::Mat(640, 1000, CV_8UC1, cv::Scalar(0, 0, 0)) ;

resize(image2,resized_image2,resized_image2.size(),0,0);

imwrite( "../results/canny_edge_detection/equalized_image2.jpg", resized_image2 );

cvtColor( image1, grayscale_image1, CV_BGR2GRAY );

cvtColor( resized_image2, grayscale_image2, CV_BGR2GRAY );

cvtColor( image3, grayscale_image3, CV_BGR2GRAY );

cvtColor( image4, grayscale_image4, CV_BGR2GRAY );

cvtColor( image5, grayscale_image5, CV_BGR2GRAY );

canny_edge_detection(grayscale_image1,1);

canny_edge_detection(grayscale_image2,2);

canny_edge_detection(grayscale_image3,3);

canny_edge_detection(grayscale_image4,4);

canny_edge_detection(grayscale_image5,5);

waitKey(0);

return 0;

}

The inverse filter is an image restoration technique used to deconvolve the point spread function from an image (i.e. to deblur it). The blurred image is first converted to the frequency domain using the Fourier transform; the frequency-domain point spread function is then inverted and multiplied element-wise with the blurred image in the frequency domain. However, in the presence of spectral nulls, the values of the deconvolved image can go to infinity. To avoid this, pseudo-inverse filters are used. In a pseudo-inverse filter, the inverted point spread function is applied only at frequencies where its spectrum is above a certain threshold. These filters, however, do not work well in the presence of noise. Results of my implementation of these filters are shown below.

Unlike inverse filters, Wiener filters not only remove the blurring effect but also try to smooth out the noise present in the image. In addition to the point spread function, a Wiener filter makes use of the signal-to-noise ratio to remove noise from the image. Comparing the images below with the pseudo-inverse filter versions, it can be seen that the Wiener filter performs better. However, it hasn't completely restored the image; my implementation of these filters might need some more tweaking.
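The thresholded inversion described above can be sketched on frequency-domain samples as follows. This is a minimal illustration under stated assumptions: both spectra are already available as flat vectors of complex values, `pseudo_inverse_filter` is a hypothetical name, and the threshold is compared against the magnitude of the point spread function's spectrum, which is one common choice rather than the only one.

```cpp
#include <cassert>
#include <complex>
#include <cstddef>
#include <vector>

// Divides the blurred spectrum G by the blur spectrum H wherever |H| is
// large enough, and outputs zero at (near-)null frequencies instead of
// letting the quotient blow up.
std::vector<std::complex<float>> pseudo_inverse_filter(
    const std::vector<std::complex<float>>& blurred_spectrum,
    const std::vector<std::complex<float>>& psf_spectrum,
    float threshold) {
    assert(blurred_spectrum.size() == psf_spectrum.size());

    std::vector<std::complex<float>> restored(blurred_spectrum.size());
    for (std::size_t i = 0; i < blurred_spectrum.size(); ++i) {
        if (std::abs(psf_spectrum[i]) > threshold) {
            restored[i] = blurred_spectrum[i] / psf_spectrum[i];
        } else {
            restored[i] = std::complex<float>(0.0f, 0.0f);
        }
    }
    return restored;
}
```

Setting `threshold` to zero gives the plain inverse filter (apart from exact nulls); raising it trades lost detail at suppressed frequencies for a bounded result.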
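For reference, one common textbook form of the Wiener filter in the frequency domain is given below. The notation is an assumption introduced here and does not come from the listing that follows: $G$ is the spectrum of the blurred, noisy image, $H$ is the spectrum of the point spread function, $S_n$ and $S_f$ are the power spectra of the noise and of the original image, and $\hat{F}$ is the spectrum of the restored image.

$$
\hat{F}(u,v) = \frac{H^{*}(u,v)}{\lvert H(u,v)\rvert^{2} + \dfrac{S_n(u,v)}{S_f(u,v)}}\, G(u,v)
$$

When the noise term $S_n/S_f$ is zero this reduces to the inverse filter $G/H$, and where the noise dominates the signal the denominator grows and the corresponding frequencies are attenuated, which is the smoothing behavior described above.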

#include <iostream>

#include <opencv2/opencv.hpp>

#include <math.h>

using namespace cv;

#define STD_DEV 50

#define KERNEL_SIZE 17

#define SIGMA 3

#define PI 3.14159

#define THRESHOLD 0.2

Mat image_padding(const Mat& input_image)

{

Mat padded_image ;

int rows = getOptimalDFTSize(input_image.rows);

int cols = getOptimalDFTSize(input_image.cols);

copyMakeBorder(input_image, padded_image, 0, rows - input_image.rows, 0, cols - input_image.cols, BORDER_CONSTANT, Scalar::all(0));

return padded_image;

}

Mat add_noise(const Mat& input_image, int std_dev)

{

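// Add zero-mean Gaussian noise in floating point so negative noise samples are not clipped to zero before the addition.

Mat noise(input_image.rows,input_image.cols,CV_32F);

randn(noise,Scalar::all(0),Scalar::all(std_dev));

Mat float_image, noisy_image;

input_image.convertTo(float_image,CV_32F);

float_image += noise;

// convertTo saturates the result to the 0..255 range.

float_image.convertTo(noisy_image,CV_8U);

return noisy_image;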

}

Mat pad_kernel(cv::Size size,const Mat& kernel, int kernel_size)

{

Mat padded_kernel = Mat::zeros(size, CV_32F);

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

padded_kernel.at< float >(j,i) = kernel.at< float >(j,i);

}

return padded_kernel;

}

Mat get_gaussian_blur_kernel(int kernel_size, float sigma)

{

Mat kernel = Mat(kernel_size, kernel_size, CV_32F, 0.0);

int k;

if(kernel_size % 2 != 0)

{

k = (kernel_size-1)/2;

}

else

{

k = (kernel_size)/2;

}

float value = 0.0;

// Create the Gaussian Kernel

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = (float)( 1.0 /( 2.0*PI*pow(sigma,2) ) ) * exp(-1.0* ( pow(i+1-(k+1),2) + pow(j+1-(k+1),2) ) / ( 2.0 * pow(sigma,2) ) ) ;

value += kernel.at< float >(j,i);

}

// Normalize kernel , so that the sum of all elements in the kernel is 1

for(int j = 0; j < kernel_size ; j++)

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = kernel.at< float >(j,i)/value ;

}

return kernel;

}

Mat get_motion_blur_kernel(int kernel_size)

{

Mat kernel = Mat::zeros(kernel_size, kernel_size, CV_32F);

int j = kernel_size/2;

for(int i = 0; i < kernel_size; i++)

{

kernel.at< float >(j,i) = 1.0/kernel_size ;

}

return kernel;

}

Mat fourier_transform(const Mat& padded_image)

{

Mat image;

padded_image.convertTo(image,CV_32F);

//Real part and imaginary part

Mat images[] = {Mat_< float >(image), Mat::zeros(image.size(), CV_32F)};

Mat complex_image;

merge(images, 2, complex_image);

dft(complex_image,complex_image);

return complex_image;

}

Mat power_spectrum(const Mat& input_image)

{

Mat complex_image = fourier_transform(input_image);

Mat images[2],image_magnitude,spectrum_image;

split(complex_image, images);

magnitude(images[0],images[1],image_magnitude);

multiply(image_magnitude,image_magnitude,spectrum_image);

return spectrum_image;

}

Mat wiener_filter(const Mat& noisy_image, const Mat& signal_spectrum, Mat kernel, int kernel_size)

{

// Use a floating-point matrix so the zero-mean noise keeps its negative samples.

Mat noise(noisy_image.rows,noisy_image.cols,CV_32F);

randn(noise,Scalar::all(0),Scalar::all(STD_DEV));

Scalar mean_input_image = mean(noisy_image);

Mat noise_spectrum = power_spectrum(noise);

Mat images[2], kernel_images[2];

Mat complex_image = fourier_transform(noisy_image);

split(complex_image,images);

Mat padded_kernel = pad_kernel(noisy_image.size(),kernel,kernel_size);

Mat kernel_spectrum = power_spectrum(padded_kernel);

Mat kernel_spectrum_squared;

Mat kernel_complex_image = fourier_transform(padded_kernel);

split(kernel_complex_image,kernel_images);

multiply(kernel_spectrum,kernel_spectrum,kernel_spectrum_squared);

Mat inv_snr = noise_spectrum/signal_spectrum;

Mat weight = Mat::zeros(noisy_image.size(), CV_32F) ;

for(int j = 0; j < noisy_image.rows ; j++)

for(int i = 0; i < noisy_image.cols; i++)

{

if( kernel_spectrum.at< float >(j,i) > THRESHOLD)

weight.at< float >(j,i) = ((kernel_spectrum_squared.at< float >(j,i))/(kernel_spectrum_squared.at< float >(j,i) + inv_snr.at< float >(j,i)))/kernel_spectrum.at< float >(j,i) ;

else

weight.at< float >(j,i) = 0.0;

}

multiply(images[0],weight,images[0]);

multiply(images[1],weight,images[1]);

merge(images,2,complex_image);

idft(complex_image,complex_image);

split(complex_image,images);

Scalar mean_restored_image = mean(images[0]);

double scale_factor = mean_input_image.val[0]/mean_restored_image.val[0];

multiply(images[0],scale_factor,images[0]);

Mat normalized_image ;

images[0].convertTo(normalized_image,CV_8UC1);

return normalized_image;

}

Mat inverse_filter(const Mat& noisy_image, Mat kernel, int kernel_size, bool pseudo_inverse = false)

{

Mat noise(noisy_image.rows,noisy_image.cols,CV_8U);

randn(noise,Scalar::all(0),Scalar::all(STD_DEV));

Scalar mean_input_image = mean(noisy_image);

Mat images[2], kernel_images[2];

Mat complex_image = fourier_transform(noisy_image);

split(complex_image,images);

Mat padded_kernel = pad_kernel(noisy_image.size(),kernel,kernel_size);

Mat kernel_spectrum = power_spectrum(padded_kernel);

Mat kernel_spectrum_squared;

Mat kernel_complex_image = fourier_transform(padded_kernel);

split(kernel_complex_image,kernel_images);

multiply(kernel_spectrum,kernel_spectrum,kernel_spectrum_squared);

Mat weight = Mat::zeros(noisy_image.size(), CV_32F) ;

for(int j = 0; j < noisy_image.rows ; j++)

for(int i = 0; i < noisy_image.cols; i++)

{

if (pseudo_inverse)

{

if( kernel_spectrum.at< float >(j,i) > THRESHOLD)

weight.at< float >(j,i) = (1/kernel_spectrum.at< float >(j,i)) ;

else

weight.at< float >(j,i) = 0.0;

}

else

{

weight.at< float >(j,i) = (1/kernel_spectrum.at< float >(j,i)) ;

}

}

multiply(images[0],weight,images[0]);

multiply(images[1],weight,images[1]);

merge(images,2,complex_image);

idft(complex_image,complex_image);

split(complex_image,images);

Scalar mean_restored_image = mean(images[0]);

double scale_factor = mean_input_image.val[0]/mean_restored_image.val[0];

multiply(images[0],scale_factor,images[0]);

Mat normalized_image ;

images[0].convertTo(normalized_image,CV_8UC1);

return normalized_image;

}

int main(int argc, char** argv )

{

Mat input_image,sample_image,resized_sample_image,padded_image,noisy_gaussian_blurred_image,noisy_motion_blurred_image,gaussian_blurred_image,motion_blurred_image;

input_image = imread( "../images/horse.jpg", CV_LOAD_IMAGE_GRAYSCALE ) ;

sample_image = imread( "../images/lena.jpg", CV_LOAD_IMAGE_GRAYSCALE ) ;

if (!input_image.data || !sample_image.data)

{

printf("No image data \n");

return -1;

}

//GaussianBlur(input_image,input_image,Size(3,3),1.5,1.5);

padded_image = image_padding(input_image);

Mat motion_blur_kernel = get_motion_blur_kernel(KERNEL_SIZE);

Mat gaussian_blur_kernel = get_gaussian_blur_kernel(KERNEL_SIZE, SIGMA);

filter2D(padded_image,motion_blurred_image,-1,motion_blur_kernel, Point(-1,-1),0,BORDER_DEFAULT);

filter2D(padded_image,gaussian_blurred_image,-1,gaussian_blur_kernel, Point(-1,-1),0,BORDER_DEFAULT);

//GaussianBlur(padded_image,gaussian_blurred_image,Size(KERNEL_SIZE,KERNEL_SIZE),SIGMA,SIGMA);

noisy_gaussian_blurred_image =add_noise(gaussian_blurred_image,STD_DEV);

noisy_motion_blurred_image =add_noise(motion_blurred_image,STD_DEV);

resize(sample_image, resized_sample_image,padded_image.size());

Mat sample_image_power_spectrum = power_spectrum(resized_sample_image);

// Each blurred image is restored with the kernel that was used to blur it.

Mat restored_image2 = wiener_filter(noisy_motion_blurred_image,sample_image_power_spectrum,motion_blur_kernel,KERNEL_SIZE);

Mat restored_image1 = wiener_filter(noisy_gaussian_blurred_image,sample_image_power_spectrum,gaussian_blur_kernel,KERNEL_SIZE);

Mat restored_image3 = inverse_filter(gaussian_blurred_image,gaussian_blur_kernel,KERNEL_SIZE);

Mat restored_image4 = inverse_filter(motion_blurred_image,motion_blur_kernel,KERNEL_SIZE);

Mat restored_image5 = inverse_filter(gaussian_blurred_image,gaussian_blur_kernel,KERNEL_SIZE, true);

Mat restored_image6 = inverse_filter(motion_blurred_image,motion_blur_kernel,KERNEL_SIZE, true);

Mat restored_image7 = inverse_filter(noisy_gaussian_blurred_image,gaussian_blur_kernel,KERNEL_SIZE, true);

Mat restored_image8 = inverse_filter(noisy_motion_blurred_image,motion_blur_kernel,KERNEL_SIZE, true);

namedWindow("Original Image", WINDOW_AUTOSIZE);

imwrite( "../results/wiener_filter/original_image.jpg", padded_image);

imshow("Original Image", padded_image);

namedWindow("Noisy Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/wiener_filter/noisy_gaussian_blurred_image.jpg", noisy_gaussian_blurred_image);

imshow("Noisy Gaussian Blurred Image", noisy_gaussian_blurred_image);

namedWindow("Noisy Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/wiener_filter/noisy_motion_blurred_image.jpg", noisy_motion_blurred_image);

imshow("Noisy Motion Blurred Image", noisy_motion_blurred_image);

namedWindow("Restored Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/wiener_filter/restored_gaussian_blurred_image.jpg", restored_image1);

imshow("Restored Gaussian Blurred Image", restored_image1);

namedWindow("Restored Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/wiener_filter/restored_motion_blurred_image.jpg", restored_image2);

imshow("Restored Motion Blurred Image", restored_image2);

namedWindow("Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/gaussian_blurred_image.jpg", gaussian_blurred_image);

imshow("Gaussian Blurred Image", gaussian_blurred_image);

namedWindow("Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/motion_blurred_image.jpg", motion_blurred_image);

imshow("Motion Blurred Image", motion_blurred_image);

namedWindow("Inverse Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/inverse_motion_blurred_image.jpg", restored_image4);

imshow("Inverse Motion Blurred Image", restored_image4);

namedWindow("Inverse Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/inverse_gaussian_blurred_image.jpg", restored_image3);

imshow("Inverse Gaussian Blurred Image", restored_image3);

namedWindow("Pseudo Inverse Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/pseudo_inverse_motion_blurred_image.jpg", restored_image6);

imshow("Pseudo Inverse Motion Blurred Image", restored_image6);

namedWindow("Pseudo Inverse Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/pseudo_inverse_gaussian_blurred_image.jpg", restored_image5);

imshow("Pseudo Inverse Gaussian Blurred Image", restored_image5);

namedWindow("Pseudo Inverse Noisy Motion Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/pseudo_inverse_noisy_motion_blurred_image.jpg", restored_image8);

imshow("Pseudo Inverse Noisy Motion Blurred Image", restored_image8);

namedWindow("Pseudo Inverse Noisy Gaussian Blurred Image", WINDOW_AUTOSIZE);

imwrite( "../results/inverse_filter/pseudo_inverse_noisy_gaussian_blurred_image.jpg", restored_image7);

imshow("Pseudo Inverse Noisy Gaussian Blurred Image", restored_image7);

waitKey(0);

return 0;

}
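The calls above compare Wiener, inverse and pseudo-inverse restoration. As a rough illustration of how these filters differ at a single frequency bin, here is a minimal sketch assuming the textbook forms of the filters. The helper names `wiener_gain` and `pseudo_inverse_gain` are hypothetical, and the `wiener_filter` and `inverse_filter` functions used above may be implemented differently.

```cpp
#include <cassert>
#include <complex>

// Per-frequency Wiener gain: conj(H) / (|H|^2 + Sn / Sf).
// H  : blur transfer function at one frequency bin
// Sf : signal power spectrum at that bin (assumed > 0)
// Sn : noise power at that bin (assumed >= 0)
// With Sn == 0 this reduces to the plain inverse filter 1 / H.
std::complex<double> wiener_gain(std::complex<double> H, double Sf, double Sn)
{
    assert(Sf > 0.0 && Sn >= 0.0);
    return std::conj(H) / (std::norm(H) + Sn / Sf);
}

// Pseudo-inverse gain: 1 / H where |H| exceeds the threshold, otherwise 0,
// so frequencies that the blur nearly wiped out are not amplified.
std::complex<double> pseudo_inverse_gain(std::complex<double> H, double threshold)
{
    return (std::abs(H) > threshold) ? 1.0 / H : std::complex<double>(0.0, 0.0);
}
```

Multiplying the spectrum of the degraded image by either gain, bin by bin, and taking the inverse DFT gives the restored image. The Wiener gain shrinks smoothly as the noise-to-signal ratio grows, while the pseudo-inverse switches off abruptly at the threshold.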

Run length encoding exploits the fact that an image usually contains runs of consecutive identical pixels, which makes it a useful tool for compressing images. Each run is stored as a pair: the pixel intensity and the number of consecutive occurrences of that intensity. The image may be scanned from left to right or in a zig-zag pattern. However, if the image has high variance and few runs of identical intensities, this encoding expands the image rather than compressing it, because every run, even one of length one, costs two stored values.

#include <iostream>

using namespace std;

int main(int argc, char** argv )

{

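// const so that the array size below is a compile-time constant (standard C++ has no variable-length arrays)

const int input_size = 25;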

int a[input_size] = {1,1,1,2,2,3,4,4,4,4,4,4,4,5,5,5,5,3,2,2,2,2,2,2,3};

//int a[input_size] = {1,1,2,4,2,5,7,1,2,3,4,10,11,15,7,3,3,3,3,6,7,8,1,1};

int prev_val = 0;

int count = 0;

int output_size=0;

cout << endl << "Input" << endl << endl;

for(int i = 0 ; i< input_size; i++)

cout << a[i] << ",";

cout << endl << endl << "Output" << endl << endl;

for(int i = 0; i< input_size; i++)

{

if(prev_val != a[i])

{

if(count != 0)

{

cout << count << " times - " << prev_val << endl;

output_size += 2;

}

count = 1;

}

else

{

++count;

}

prev_val = a[i];

}

cout << count << " times - " << prev_val << endl;

output_size += 2;

cout << endl << "Input Size = " << input_size << " , Output Size = " << output_size << endl;

cout << "Compression Ratio = " << (float)input_size/output_size << " : 1" << endl << endl;

}
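The same encoding can be written as a reusable function that returns the (intensity, run length) pairs, which makes the expansion case described earlier easy to check. This is a minimal sketch; the names `run_length_encode` and `compression_ratio` are hypothetical, and the size accounting (two stored numbers per run) follows the program above.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Run-length encode a sequence into (value, run length) pairs.
std::vector<std::pair<int, int> > run_length_encode(const std::vector<int>& input)
{
    std::vector<std::pair<int, int> > runs;
    for (std::size_t i = 0; i < input.size(); ++i)
    {
        if (!runs.empty() && runs.back().first == input[i])
            ++runs.back().second;                          // extend the current run
        else
            runs.push_back(std::make_pair(input[i], 1));   // start a new run
    }
    return runs;
}

// Ratio of input symbols to stored numbers (two per run).
// A value below 1 means the encoding expanded the data.
double compression_ratio(std::size_t input_size, std::size_t run_count)
{
    return run_count == 0 ? 0.0
                          : static_cast<double>(input_size) / (2.0 * run_count);
}
```

For the 25-element input used above this yields 8 runs, so 16 stored numbers and a ratio of 25/16. A sequence with no repeats, such as 1, 2, 3, 4, yields one run per element and a ratio of 0.5, which is the expansion case.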

Huffman encoding is an efficient compression technique that assigns variable-length bit codes to intensities based on how often they occur in the image. It builds the codes from the image histogram: the most frequent pixel intensity receives the shortest code and the least frequent intensities receive the longest codes. However, the Huffman code book must be stored alongside the data as overhead so that the decoder can reconstruct the image.

#include <cstddef>

#include <iostream>

#include <queue>

#include <string>

#include <vector>

#define MAX_ELEMENT 10

using namespace std;

string encoded_data[MAX_ELEMENT+1] = {""};

//Data type used for Priority Queue

class Node

{

int data;

unsigned int frequency;

public:

Node *left, *right;

Node(int _data, unsigned int _frequency)

{

data = _data;

frequency = _frequency;

// Leaf nodes have no children; encode() relies on NULL to stop recursing

left = right = NULL;

}

int getData() const { return data; }

unsigned int getFrequency() const { return frequency; }

};

//Comparator used by Priority Queue for sorting

class FrequencyComparator

{

public:

bool operator() (const Node* p1, const Node* p2) const

{

return p1->getFrequency() > p2->getFrequency();

}

};

//Encode bits as a string

void encode(Node* root, string str)

{

if(root == NULL)

return;

if(root->getData() > -1)

encoded_data[root->getData()] = str;

encode(root->left, str+"0");

encode(root->right,str+"1");

}

//Implement Huffman Encoding

void huffman_encode(int frequency_table[], int size)

{

Node *left_node, *right_node, *top_node;

// Creates a Min heap of nodes (order by Frequency)

priority_queue<Node*, vector<Node*>, FrequencyComparator> MinHeap;

for (int i = 0; i < size; i++)

{

if(frequency_table[i] > 0)

MinHeap.push(new Node(i,frequency_table[i]) );

}

while(MinHeap.size() > 1)

{

left_node = MinHeap.top();

MinHeap.pop();

right_node = MinHeap.top();

MinHeap.pop();

// -1 denotes a special node containing frequencies of left and right nodes

top_node = new Node(-1, left_node->getFrequency() + right_node->getFrequency());

top_node->left = left_node;

top_node->right = right_node;

MinHeap.push(top_node);

}

encode(MinHeap.top(),"");

for(int i = 0; i < size;i++)

{

if(frequency_table[i] > 0)

cout << " Data = " << i << " , Huffman Code = " << encoded_data[i] << " , Frequency = " << frequency_table[i] << endl;

}

cout << endl;

}

int main ()

{

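// const so that the array size below is a compile-time constant (standard C++ has no variable-length arrays)

const int input_size = 25;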

int a[input_size] = {1,1,1,1,1,3,4,4,4,4,4,4,4,5,5,5,5,3,7,1,1,1,2,2,9};

int frequency_table[MAX_ELEMENT+1] = {0};

cout << endl << "Input" << endl << endl;

for(int i = 0 ; i < input_size; i++)

cout << a[i] << ",";

for(int i = 0; i < input_size; i++)

{

frequency_table[a[i]] += 1;

}

cout << endl << endl << "Output - Bit Representation" << endl;

huffman_encode(frequency_table, MAX_ELEMENT+1);

return 0;

}
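To see how much the variable-length codes save, the total encoded size can be computed directly from the frequency table without building the tree. This is a minimal sketch: the names `huffman_encoded_bits` and `fixed_length_bits` are hypothetical, a lone symbol is assumed to cost one bit per occurrence (the program above would assign it an empty code), and the code book overhead is not counted.

```cpp
#include <cstddef>
#include <functional>
#include <queue>
#include <vector>

// Total number of bits in the Huffman-coded message for a frequency table.
// Every merge of the two lightest subtrees adds one bit to each symbol
// beneath them, so the sum of the merged weights equals the encoded length.
unsigned long huffman_encoded_bits(const std::vector<unsigned int>& frequency_table)
{
    std::priority_queue<unsigned long, std::vector<unsigned long>,
                        std::greater<unsigned long> > heap;   // min-heap of weights
    for (std::size_t i = 0; i < frequency_table.size(); ++i)
        if (frequency_table[i] > 0)
            heap.push(frequency_table[i]);

    if (heap.size() == 1)
        return heap.top();   // assumption: a lone symbol uses a 1-bit code

    unsigned long total = 0;
    while (heap.size() > 1)
    {
        unsigned long a = heap.top(); heap.pop();
        unsigned long b = heap.top(); heap.pop();
        total += a + b;
        heap.push(a + b);
    }
    return total;
}

// Bits needed when every symbol uses the same fixed-length code.
unsigned long fixed_length_bits(const std::vector<unsigned int>& frequency_table)
{
    unsigned long distinct = 0, total = 0;
    for (std::size_t i = 0; i < frequency_table.size(); ++i)
    {
        if (frequency_table[i] > 0) ++distinct;
        total += frequency_table[i];
    }
    if (distinct == 0) return 0;
    unsigned int bits = 1;
    while ((1UL << bits) < distinct) ++bits;   // smallest width covering all symbols
    return total * bits;
}
```

For the frequency table of the 25-symbol input above (8, 2, 2, 7, 4, 1 and 1 occurrences of the values 1, 2, 3, 4, 5, 7 and 9), this gives 62 bits for the Huffman code against 75 bits for a 3-bit fixed-length code.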

From the given images flower.tif and flower1.tif, the blurring function was extracted in the frequency domain by dividing the spectrum of the blurred image by the spectrum of the original image. Taking the pseudo-inverse of this blurring function and multiplying it with the blurred image in the frequency domain restores the image, and an inverse DFT then brings the result back to the spatial domain. However, there may be a few artifacts because some information is lost in the blur. The detail in the restored image varies with the threshold level: a lower threshold inverts more frequencies and recovers more detail, but it also amplifies noise. Alternatively, the blurred image was sharpened using the unsharp mask technique, which looks slightly better than the inverse filter.

For the image caterpillar.jpg, I tried to guess the impulse response and tested various kernels such as a Gaussian kernel and an averaging kernel. However, none of them restored the image. This case calls for blind deconvolution, in which candidate kernels and original images are guessed and scored with a cost function, and the best ones are kept. Due to lack of time, I was not able to implement this algorithm. A classical sharpening technique combined with a median filter enhanced the image a bit.
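The frequency-domain steps used for the flower images can be sketched as follows, operating directly on spectra that are assumed to be already computed (for example with a DFT). The names `estimate_blur` and `pseudo_inverse_restore`, the `Complex` typedef and the `eps` guard are hypothetical and are not taken from the project code.

```cpp
#include <cassert>
#include <complex>
#include <cstddef>
#include <vector>

typedef std::complex<double> Complex;

// Estimate the blur transfer function H = B / F bin by bin, where B and F are
// the spectra of the blurred and original images. Bins where |F| is not above
// eps carry no usable information and are set to 0.
std::vector<Complex> estimate_blur(const std::vector<Complex>& blurred,
                                   const std::vector<Complex>& original,
                                   double eps)
{
    assert(blurred.size() == original.size());
    std::vector<Complex> H(blurred.size());
    for (std::size_t k = 0; k < H.size(); ++k)
        H[k] = (std::abs(original[k]) > eps) ? blurred[k] / original[k]
                                             : Complex(0.0, 0.0);
    return H;
}

// Pseudo-inverse restoration: F_hat = B / H where |H| > threshold, else 0.
std::vector<Complex> pseudo_inverse_restore(const std::vector<Complex>& blurred,
                                            const std::vector<Complex>& H,
                                            double threshold)
{
    assert(blurred.size() == H.size());
    std::vector<Complex> restored(blurred.size());
    for (std::size_t k = 0; k < restored.size(); ++k)
        restored[k] = (std::abs(H[k]) > threshold) ? blurred[k] / H[k]
                                                   : Complex(0.0, 0.0);
    return restored;
}
```

Bins zeroed by the threshold are where the restored image loses information, which is one source of the artifacts mentioned above.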